Written by Eloisa Mae

Reviewed by Henry Dornier


A call center agent scorecard shows you what your top performers do well so you can replicate that success across your entire team. Here's what you need to know about building scorecards that work.

What is a call center agent scorecard?

A call center agent scorecard tracks how well sales reps perform during customer calls.

Scorecards define what effective selling looks like in your contact center. They grade each rep against those standards.

What you track:

Hard metrics: conversion rates, talk time, appointments set

Selling skills: objection handling, value communication, closing technique

Scorecards turn feedback like "improve your pitch" into concrete data. You show reps they're scoring 6/10 on objection handling but 9/10 on opening. That specificity makes coaching stick.

You might run the same training for everyone. You hire similar profiles. Yet performance gaps persist. Your sales floor has a handful of reps crushing quota while the rest hover at 60% attainment. That's why you need scorecards.

Agent scorecards reveal exactly what top performers do differently. They measure the behaviors that drive conversions. They track which skills separate closers from callers.

Sales leaders use scorecards to:

Spot coaching opportunities before they become quota misses

Maintain consistency across shifts and team leads

Connect rep behavior to revenue outcomes

Create fair, data-driven performance reviews

The best scorecards reveal what drives sales and what changes will boost conversions.

How to build a call center agent scorecard in 7 steps

Follow these steps to build a scorecard that measures what actually drives conversions in your call center, rather than what sounds good on paper.

Step 1: Define what "good" looks like for your team

Start with one question: what behaviors drive conversions in your specific sales motion?

Generic scorecards pulled from the internet measure what sounds important, not what actually closes deals in your vertical. A Medicare brokerage has different conversion drivers than a home services call center.

Start by talking to your top three reps and asking what they do differently on calls that convert, then pair that with a review of your highest-converting recorded calls to look for patterns in phrasing, pacing, and objection handling.

Key areas to evaluate:

Opening and qualification

Value communication

Objection handling

Closing technique

Process and script adherence

Pro tip: Pull this data before building criteria. Scorecards built on assumptions miss the behaviors that actually move revenue. Your best reps hold institutional knowledge that belongs in your scorecard, not in their heads.

Step 2: Choose your core metrics (quantitative and qualitative)

Build your scorecard around two types of metrics. Quantitative metrics show who needs coaching. Qualitative metrics show what to coach.

Quantitative metrics

| 📊 Metric | 🔍 What it measures | ⚠️ What low scores signal |
| --- | --- | --- |
| Conversion rate | The percentage of prospects who buy | Skills gaps or low lead quality |
| Average Handle Time (AHT) | Time spent per call, including wrap-up | Long AHT + low conversion = spinning wheels. Short AHT + low conversion = rushing |
| First Call Resolution (FCR) | Prospects who make a decision on the first call | Prospects are slipping through to follow-up calls that never close |
| First Response Time (FRT) | How fast reps connect with inbound leads | Leads contacted after 30 minutes convert at far lower rates than those reached within 5 minutes |
| Appointments set | Bookings made per rep per day | Weak qualification or unclear value communication |
| Revenue per call | Conversion rate multiplied by average deal size | Reps may be closing volume but leaving money on the table |
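To make the definitions in the table concrete, here is a minimal Python sketch that derives three of these metrics from raw call records. The `Call` fields and the `rep_metrics` helper are hypothetical names chosen for illustration, and the sample numbers are made up; a real dialer or CRM export will look different.

```python
from dataclasses import dataclass


@dataclass
class Call:
    handle_seconds: int  # talk time plus wrap-up
    converted: bool      # True if the prospect bought
    deal_value: float    # 0.0 for calls that did not convert


def rep_metrics(calls: list[Call]) -> dict[str, float]:
    """Summarize one rep's calls into three scorecard metrics."""
    total = len(calls)
    if total == 0:
        return {"conversion_rate": 0.0, "aht_minutes": 0.0, "revenue_per_call": 0.0}
    revenue = sum(c.deal_value for c in calls if c.converted)
    return {
        "conversion_rate": sum(c.converted for c in calls) / total,
        "aht_minutes": sum(c.handle_seconds for c in calls) / total / 60,
        # Same value as conversion rate multiplied by average deal size.
        "revenue_per_call": revenue / total,
    }


# Made-up sample data: four calls, two of which converted.
sample_calls = [
    Call(600, True, 400.0),
    Call(480, False, 0.0),
    Call(720, False, 0.0),
    Call(600, True, 200.0),
]
print(rep_metrics(sample_calls))
```

With this sample, half the calls convert, AHT is 10 minutes, and revenue per call is $150, which matches a 50% conversion rate multiplied by a $300 average deal size.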

Qualitative metrics

| 📊 Metric | 🔍 What to evaluate |
| --- | --- |
| Opening strength | Did the rep build credibility fast and set a clear agenda? |
| Objection handling | Did they acknowledge concerns, ask clarifying questions, and reframe into value? |
| Value communication | Did they connect features to specific prospect outcomes rather than reciting specs? |
| Closing technique | Did they create urgency at the right moment and handle final hesitations confidently? |
| Script adherence | Did they follow the framework, including required disclosures and qualification steps? |
| CSAT | What did the prospect report about the quality of the interaction after the call? |

Pro tip: Keep your initial scorecard to 5-8 criteria. Teams that track 15+ things at once can't focus on improving any of them. Nail the core metrics first, then expand.

Step 3: Set weights based on revenue impact

Not every metric matters equally. Weight each criterion based on how much it drives conversions in your business.

A Medicare brokerage might weight script adherence and compliance checks at 30% because regulatory requirements are non-negotiable. A home improvement call center might weight appointment setting at 40% since filling the pipeline is the primary goal.

Sample weighting framework:

| Metric | Example weight |
| --- | --- |
| Conversion rate | 30% |
| Objection handling | 25% |
| Value communication | 20% |
| Closing technique | 15% |
| Script adherence | 10% |

Adjust these percentages based on your sales motion. What improves deal flow in your team should get the heaviest weight.
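If you keep weights in a config file or script, a small check can catch weights that no longer add up to 100% after an adjustment. The sketch below uses the sample framework above; `WEIGHTS` and `validate_weights` are illustrative names, not part of any particular tool.

```python
# Sample weights from the framework above, expressed as fractions of 1.0.
WEIGHTS = {
    "conversion_rate": 0.30,
    "objection_handling": 0.25,
    "value_communication": 0.20,
    "closing_technique": 0.15,
    "script_adherence": 0.10,
}


def validate_weights(weights: dict[str, float], tolerance: float = 1e-9) -> None:
    """Raise ValueError if any weight is negative or the total is not 100%."""
    if any(w < 0 for w in weights.values()):
        raise ValueError("weights must be non-negative")
    total = sum(weights.values())
    if abs(total - 1.0) > tolerance:
        raise ValueError(f"weights sum to {total:.2f}, expected 1.00")


validate_weights(WEIGHTS)  # passes silently for the sample framework
```

Running this check whenever you revisit weights keeps a quarterly tweak from silently inflating or deflating every rep's total score.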

Pro tip: Revisit weights quarterly. Market conditions shift, products change, and new objection patterns emerge. Your scorecard should reflect what's driving revenue today.

Step 4: Build your scoring rubric

Scores without definitions create inconsistency. One manager's "8" becomes another's "6."

Define exactly what each rating level looks like. Use a 1-5 or 1-10 scale per criterion, and document specific behavioral examples for each level.

Example rubric for objection handling (1-5):

5: Acknowledges the concern, asks a clarifying question, reframes into a value discussion, and moves toward a close with confidence.

4: Acknowledges the concern and provides a reasonable response. Maintains call momentum.

3: Addresses the objection but doesn't fully resolve the prospect's concern.

2: Gives a defensive response or backs off too fast.

1: Ignores the objection or becomes argumentative.

Apply this same specificity to every metric. Vague rubrics produce unreliable scores and unfair evaluations.

Pro tip: Build your rubric with your managers, not just leadership. Managers who help design scoring definitions score calls more consistently and defend them more confidently to reps.

Step 5: Decide how scoring happens

Three approaches exist for scoring calls. Each fits a different team size and budget.

Manual evaluation: Sales managers listen to recorded calls and score them against the rubric. Best for small teams (under 25 reps) or when you're testing a new scorecard. Time-intensive at scale.

Automated scoring: AI tools analyze calls in real time and flag behaviors against your criteria. Scales across hundreds of reps without adding manager hours. Misses the nuanced context that only humans catch.

Hybrid approach: AI automatically scores every call. Managers review flagged calls, high-value deals, and samples to verify accuracy. Gives you full coverage with human oversight where it counts.

Pro tip: If you're using automated scoring, set escalation rules. Calls that deviate significantly from a rep's average score, high-value deals, or new objection types should trigger a manual manager review.
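The escalation rules in this step can be sketched as a single function that returns the reasons a call should go to a manager. Everything here is an assumption for illustration: the function name, the 2-point deviation threshold, and the $5,000 deal cutoff are placeholders you would tune to your own team.

```python
from statistics import mean


def needs_manual_review(
    call_score: float,
    rep_recent_scores: list[float],
    deal_value: float,
    objection_type: str,
    known_objections: set[str],
    max_deviation: float = 2.0,            # assumed threshold, in score points
    high_value_threshold: float = 5000.0,  # assumed deal-size cutoff
) -> list[str]:
    """Return the escalation reasons for one call (empty list = no review)."""
    reasons = []
    if rep_recent_scores and abs(call_score - mean(rep_recent_scores)) >= max_deviation:
        reasons.append("score deviates from rep average")
    if deal_value >= high_value_threshold:
        reasons.append("high-value deal")
    if objection_type and objection_type not in known_objections:
        reasons.append("new objection type")
    return reasons


# A rep who usually averages 7.5 scores a 4.0 on a routine call.
print(needs_manual_review(4.0, [7.5, 8.0, 7.0], 1200.0, "price", {"price", "timing"}))
```

Returning the reasons rather than a bare yes/no gives the manager context on why the call landed in their queue.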

Step 6: Build compliance into the scoring model

Regulated industries need compliance criteria built directly into the scorecard, not reviewed separately afterward.

For Medicare and insurance sales teams, this means scoring whether reps:

Read required recording disclosures on every call

Followed your qualification process in the correct order

Avoided making promises outside your approved product scope

Documented outcomes accurately in your CRM

Alpharun builds your compliance rules and coaching standards directly into the scoring model. You customize the criteria to match your business:

Simple criteria, like a recording disclosure to read on every call.

Complex criteria, like your company's unique lead-discovery tactics.

Checks that reps followed your qualification process.

Monitoring how many deals were closed and how many of those met compliance rules.

That creates consistent, fair evaluations across reps, teams, and time, not compliance reviews that happen after something goes wrong.
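As a rough illustration of tracking closed deals against compliance rules, the sketch below counts how many closed calls passed every check from the list earlier in this step. It is a generic example, not Alpharun's API or data model; the `ScoredCall` fields and `compliant_close_summary` are hypothetical names.

```python
from dataclasses import dataclass


@dataclass
class ScoredCall:
    closed: bool
    disclosure_read: bool
    qualification_in_order: bool
    stayed_in_product_scope: bool
    crm_documented: bool

    def is_compliant(self) -> bool:
        """A call is compliant only if every check passed."""
        return all((
            self.disclosure_read,
            self.qualification_in_order,
            self.stayed_in_product_scope,
            self.crm_documented,
        ))


def compliant_close_summary(calls: list[ScoredCall]) -> dict[str, float]:
    """Count closed deals and how many of them met all compliance checks."""
    closed = [c for c in calls if c.closed]
    compliant = [c for c in closed if c.is_compliant()]
    rate = len(compliant) / len(closed) if closed else 0.0
    return {"closed": len(closed), "compliant_closed": len(compliant), "compliant_rate": rate}


# Made-up sample: two closed deals, one of which skipped the disclosure.
sample = [
    ScoredCall(True, True, True, True, True),
    ScoredCall(True, False, True, True, True),
    ScoredCall(False, True, True, True, True),
]
print(compliant_close_summary(sample))
```

Treating compliance as all-or-nothing per call is a design choice for this sketch; some teams may prefer to score each check separately.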

Pro tip: SOC 2 Type 2-certified platforms matter here. If you're scoring calls that include sensitive health or financial data, your scoring platform needs to meet the same standards your business does.

Step 7: Pilot, calibrate, and refine

Don't launch scorecards across your entire sales floor on day one. Pick one team of 10-15 reps with a supportive manager. Run a 4-6 week pilot.

What to measure during your pilot:

Manager completion rate: Are evaluations being finished on schedule?

Rep engagement: Are reps reviewing their scores and asking questions?

Conversion impact: Are close rates trending up after coaching?

Time investment: Is the process sustainable for managers?

After the pilot, run a calibration session where all managers independently score the same 3-5 calls. Then compare results, discuss where scores diverged, and document what each rating level means going forward so everyone is working from the same standard.

Run calibration sessions monthly to prevent scoring drift. When two managers consistently score the same behaviors differently, your data loses value fast.
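One simple way to see where scores diverged in a calibration session is to compare the highest and lowest rating each call received. This is a minimal sketch; the manager names, call IDs, and the 1-point tolerance are invented for the example.

```python
def calibration_gaps(
    scores_by_manager: dict[str, dict[str, int]],
    max_spread: int = 1,  # assumed tolerance, in rating points
) -> dict[str, int]:
    """Return calls whose highest and lowest ratings differ by more than max_spread."""
    call_ids: set[str] = set()
    for scores in scores_by_manager.values():
        call_ids.update(scores)

    gaps = {}
    for call_id in sorted(call_ids):
        ratings = [s[call_id] for s in scores_by_manager.values() if call_id in s]
        spread = max(ratings) - min(ratings)
        if spread > max_spread:
            gaps[call_id] = spread
    return gaps


# Three managers independently score the same two calls on a 1-5 scale.
session = {
    "manager_a": {"call-1": 4, "call-2": 3},
    "manager_b": {"call-1": 2, "call-2": 3},
    "manager_c": {"call-1": 4, "call-2": 4},
}
print(calibration_gaps(session))
```

Here only call-1 is flagged, because its ratings span two points. The flagged calls are the ones worth discussing in the session.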

Pro tip: Gather rep feedback before you scale. Reps who help refine evaluation criteria are far more likely to trust the results and engage with coaching.

Mistakes to avoid when building a call center scorecard

These are the mistakes that turn a useful coaching tool into something reps resent and managers quietly stop using.

Mistake 1: Tracking too many metrics at once

When scorecards include 20+ criteria, managers spend more time filling out forms than coaching, and reps have no idea where to actually focus. Improvement requires prioritization, and you can't prioritize 20 things at once.

How to fix it: Start with 5 to 8 core criteria that directly connect to close rates. Get your team comfortable with those, then expand once scoring is part of your weekly rhythm.

Mistake 2: Using vague scoring definitions

Telling a manager to "rate objection handling 1 to 5" without defining what each level looks like produces inconsistent results. One manager scores a decent recovery as a 4, while another scores the same call as a 2. Your data becomes unreliable, and reps feel like evaluations are arbitrary.

How to fix it: Write out specific behavioral examples for every rating level on every criterion before you score a single call. Two managers reviewing the same call should consistently reach the same conclusion.

Mistake 3: Scoring without coaching

Scorecard data sitting in a dashboard does nothing on its own. The whole point of grading calls is to give managers a precise, evidence-based starting point for coaching conversations. Every evaluation should connect to a specific skill gap and a concrete next step.

How to fix it: Schedule regular one-on-one coaching sessions built directly around scorecard results. If you're collecting scores but not acting on them, you're producing numbers without producing behavior change.

Mistake 4: Weighting everything equally

Treating conversion rate and CRM documentation as if they matter equally is a fast way to build a scorecard that feels fair on paper but drives the wrong behaviors in practice.

How to fix it: Weight each criterion based on its actual revenue impact in your sales motion. What closes deals in your business should carry the heaviest weight, and that will look different depending on your vertical and sales cycle.

Mistake 5: Leaving FCR and AHT out of your metrics

First Call Resolution (FCR) and Average Handle Time (AHT) are two of the most revealing metrics in any sales scorecard, and a surprising number of teams leave them out entirely. FCR shows how often reps close on the first interaction.

Low FCR means prospects are slipping into follow-up cycles that rarely convert. AHT shows how efficiently reps use call time, and when tracked alongside conversion rate, it tells you whether reps are rushing or stalling.

How to fix it: Add both metrics to your scorecard from the start and always review them together. A rep with low AHT and low conversion is rushing. A rep with high AHT and low conversion is spinning their wheels.
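The rushing versus spinning-wheels logic can be expressed as a small rule. The sketch below assumes "low" and "high" mean below or above the team average, which is one reasonable baseline among several; `diagnose` is a hypothetical helper name.

```python
def diagnose(
    aht_minutes: float,
    conversion_rate: float,
    team_aht_minutes: float,
    team_conversion_rate: float,
) -> str:
    """Classify a rep by reading AHT and conversion rate together."""
    if conversion_rate >= team_conversion_rate:
        return "on track"
    if aht_minutes < team_aht_minutes:
        return "rushing"          # short calls, low conversion
    return "spinning wheels"      # long (or average) calls, low conversion


# Team averages in this made-up example: 10-minute AHT, 12% conversion.
print(diagnose(6.0, 0.08, 10.0, 0.12))
print(diagnose(15.0, 0.08, 10.0, 0.12))
```

The first rep is flagged as rushing and the second as spinning wheels, even though both convert at the same rate.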

Mistake 6: Focusing only on compliance and ignoring soft skills

Compliance criteria are non-negotiable, especially in regulated industries like Medicare and insurance. But scorecards that only check whether reps followed a script miss the behaviors that actually win deals, such as tone, empathy, active listening, and genuine rapport-building.

Reps can pass every compliance check and still lose the sale.

How to fix it: Include qualitative criteria that evaluate soft skills alongside compliance checks. Grade whether reps are building real connections with prospects, not just ticking boxes.

Mistake 7: Scoring too few calls per rep

Reviewing two or three calls a month gives you a snapshot, not a pattern. A rep can have a strong call on the day you review and a weak one every other day of the week. Decisions made on thin data produce unreliable coaching and unfair evaluations.

How to fix it: Aim for at least three evaluated calls per rep per week. If manual review at that volume is not realistic for your team size, consider automated scoring tools that cover every call and flag the ones that need manager attention.

Mistake 8: Only pulling out scorecards during discipline conversations

When reps only see their scores during performance improvement plans or formal reviews, scoring becomes something to fear rather than something to learn from. That association kills buy-in fast and makes reps defensive instead of receptive to feedback.

How to fix it: Share scorecard results weekly, celebrate score improvements publicly, and deliver specific coaching privately. Make it clear from day one that the scorecard exists to help reps close more deals.

Mistake 9: Not updating your scorecard as your business evolves

A scorecard built for your sales motion six months ago may not reflect what drives conversions today. New objection patterns emerge, products change, compliance requirements shift, and top performer behaviors evolve. A static scorecard quietly becomes less useful without anyone noticing.

How to fix it: Review your scorecard criteria and weightings at least once a quarter. Use your conversion data and coaching observations to identify what the scorecard is missing and what criteria are no longer pulling their weight.

Call center scorecard examples

Let's walk through three scorecard types you can adapt for your sales team:

1. QA monitoring scorecard

This scorecard evaluates call quality by reviewing recorded sales calls. You can do this through manual review or use automated tools.

Sample structure:

Opening and qualification (15%): Used an effective opener, asked qualifying questions, and confirmed the decision-maker

Value communication (25%): Articulated benefits with clarity, relevant examples, and connected to the prospect's needs

Objection handling (30%): Acknowledged concerns, asked clarifying questions, and reframed the conversation

Closing (25%): Created appropriate urgency, asked for commitment, and handled any final hesitations

Process adherence (5%): Followed script framework, documented in CRM, and scheduled a follow-up

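Scoring: Rate each criterion 1-5, multiply each rating by its weight, and sum the results for a total score. For example, a rep who scores 4 on objection handling (30% weight) but 2 on closing (25% weight), and 4, 3, and 3 on opening, value communication, and process adherence, gets a final score of 3.2/5.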
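The weighted total can be sketched in a few lines of Python. The weights come from the sample structure above. The example assumes ratings of 4 on objection handling and 2 on closing, plus 4, 3, and 3 on opening, value communication, and process adherence; those last three ratings are assumptions chosen so the total works out to 3.2.

```python
QA_WEIGHTS = {
    "opening_and_qualification": 0.15,
    "value_communication": 0.25,
    "objection_handling": 0.30,
    "closing": 0.25,
    "process_adherence": 0.05,
}


def weighted_score(ratings: dict[str, int], weights: dict[str, float]) -> float:
    """Multiply each 1-5 rating by its weight and sum the results."""
    if set(ratings) != set(weights):
        raise ValueError("ratings and weights must cover the same criteria")
    return sum(ratings[name] * weights[name] for name in weights)


ratings = {
    "opening_and_qualification": 4,
    "value_communication": 3,
    "objection_handling": 4,
    "closing": 2,
    "process_adherence": 3,
}
print(round(weighted_score(ratings, QA_WEIGHTS), 2))  # 3.2
```

Because the weights sum to 100%, the total stays on the same 1-5 scale as the individual ratings.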

2. Call center KPI scorecard

This scorecard tracks performance through metrics pulled from your dialer and CRM.

Sample metrics:

Conversion rate: Target ≥ 12% (40% weight)

Talk time: Target 8-12 minutes (15% weight)

Appointments set: Target ≥ 5 daily (25% weight)

Revenue per call: Target ≥ $45 (15% weight)

Follow-up completion: Target ≥ 90% (5% weight)

Scoring: Reps receive points based on how close they land to targets. Meeting all targets = 100 points. Missing the conversion target by 3 percentage points (9% against a 12% target) might deduct 20 points from the final score.
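How points are deducted is a design decision. The sketch below shows one possible scheme, linear attainment capped at 100% of each target, using the sample targets and weights above. It is an assumption, not a standard: under this scheme a rep at 9% conversion loses 10 points, so a steeper penalty curve would be needed to produce a 20-point deduction.

```python
def attainment_at_least(actual: float, target: float) -> float:
    """Fraction of a 'target or higher' goal achieved, capped at 1.0."""
    return max(0.0, min(actual / target, 1.0))


def attainment_in_range(actual: float, low: float, high: float) -> float:
    """Full credit inside the range, proportional credit outside it."""
    if low <= actual <= high:
        return 1.0
    if actual <= 0:
        return 0.0
    return actual / low if actual < low else high / actual


def kpi_points(rep: dict[str, float]) -> float:
    """Score a rep out of 100 using the sample targets and weights."""
    return (
        40 * attainment_at_least(rep["conversion_rate"], 0.12)
        + 15 * attainment_in_range(rep["talk_time_minutes"], 8, 12)
        + 25 * attainment_at_least(rep["appointments_per_day"], 5)
        + 15 * attainment_at_least(rep["revenue_per_call"], 45)
        + 5 * attainment_at_least(rep["follow_up_completion"], 0.90)
    )


# Made-up rep who hits every target except conversion (9% vs. 12%).
rep = {
    "conversion_rate": 0.09,
    "talk_time_minutes": 10,
    "appointments_per_day": 5,
    "revenue_per_call": 45,
    "follow_up_completion": 0.90,
}
print(round(kpi_points(rep), 1))
```

Capping attainment at 100% keeps a rep from offsetting a missed conversion target by overshooting a lower-priority metric.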

3. Hybrid scorecard (quantitative + qualitative)

This approach combines automated KPI tracking with manual quality evaluation for complete visibility.

Sample structure:

Quantitative section (50% weight): Conversion rate, talk time, appointments set, revenue per call

Qualitative section (50% weight): Opening, value communication, objection handling, closing

Your system captures metrics from every call on autopilot. Sales managers score 3-5 sample calls per rep each week for quality criteria by hand. Both scores are combined into a single performance rating.
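Combining the two sections requires putting them on the same scale first. In this sketch, the quantitative section is assumed to be a 0-100 points total and the qualitative section an average of 1-5 call ratings, rescaled to 0-100 by dividing by 5. That rescaling and the `hybrid_rating` name are illustrative choices.

```python
def hybrid_rating(
    kpi_points: float,
    quality_scores: list[float],
    scale_max: int = 5,
    kpi_weight: float = 0.5,
) -> float:
    """Blend a 0-100 KPI total with averaged call-quality ratings."""
    if not quality_scores:
        raise ValueError("at least one scored call is required")
    average_quality = sum(quality_scores) / len(quality_scores)
    quality_points = average_quality / scale_max * 100  # rescale to 0-100
    return kpi_weight * kpi_points + (1 - kpi_weight) * quality_points


# 90 KPI points plus three manually scored calls this week.
print(round(hybrid_rating(90.0, [3.0, 4.0, 3.5]), 1))
```

In this example the sampled calls average 3.5 out of 5, which rescales to 70 points, so the blended rating lands at 80.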

High-impact use cases for call center scorecards

Generic scorecards measure everything but fix nothing. Here's where focused scorecards actually move the needle on sales performance:

Tracking selling skills that drive conversions

Product knowledge is table stakes. Selling skills separate quota-hitters from chronic underperformers.

Scorecards quantify subjective elements like rapport-building, objection handling, and urgency creation. You can grade whether reps:

Build credibility in the opening 30 seconds

Ask questions that uncover buying motivation

Use stories and examples that resonate with specific prospect types

Create appropriate urgency without damaging trust

Two reps pitch the same product to similar prospects. One converts at 40%, the other at 15%. Selling skill makes the difference. Measure it to know who to coach and who should train others.

Identifying coaching opportunities before they become quota misses

Waiting until the end of the quarter to fix performance problems means months of lost sales. Your reps miss out on commission, and your team misses revenue targets.

Scorecards spot trends early:

A rep's closing scores drop over two weeks? Burnout or increased objections might be hitting them.

Many reps score low on the same thing? Your training failed to stick, or market conditions shifted.

Top performers share specific behaviors that others don't? You found best practices to scale across your team.

Set up alerts that trigger when performance drops. Say a rep's weekly average falls 10% or they score below 6/10 two weeks in a row. Their manager gets an alert with specific call examples and coaching tips on what to fix.
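The two alert conditions described here can be sketched as a single check over a rep's weekly averages. The function name and defaults are illustrative, and the sketch reads "falls 10%" as a drop relative to the previous week, which is one possible interpretation.

```python
def should_alert(
    weekly_averages: list[float],
    drop_threshold: float = 0.10,  # a 10% week-over-week drop
    floor: float = 6.0,            # on a 10-point scale
) -> bool:
    """weekly_averages is ordered oldest to newest."""
    if len(weekly_averages) < 2:
        return False
    previous, current = weekly_averages[-2], weekly_averages[-1]
    dropped = previous > 0 and (previous - current) / previous >= drop_threshold
    below_floor_twice = previous < floor and current < floor
    return dropped or below_floor_twice


print(should_alert([8.0, 7.0]))  # True: a 12.5% drop
print(should_alert([6.5, 6.2]))  # False: small dip, still above the floor
print(should_alert([5.8, 5.9]))  # True: below 6/10 two weeks in a row
```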

Aligning rep performance with revenue goals

Your company wants to grow revenue by 25% this quarter. Which rep behaviors will drive that growth?

Scorecards create the connection between individual actions and business outcomes. You can correlate:

Which selling skills predict high conversion rates

Whether faster talk time improves or hurts deal size

How objection handling quality relates to close rates

Which opening techniques book more qualified appointments

Your analysis shows reps who score 8+ on "using customer success stories" convert at 18%. Reps scoring 5-7 convert at 12% with the same products and pricing. Now you know what to coach.
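An analysis like this can start as a simple grouping of reps into skill-score bands. The sketch below pools calls and conversions per band. The data is made up to mirror the 18% and 12% figures in the example, and the band boundaries are assumptions.

```python
from collections import defaultdict


def band_for(score: float) -> str:
    """Assign a skill score (0-10) to a band."""
    if score >= 8:
        return "8+"
    if score >= 5:
        return "5-7"
    return "below 5"


def conversion_by_band(reps: list[tuple[float, int, int]]) -> dict[str, float]:
    """reps holds (skill_score, calls, conversions); returns pooled rate per band."""
    totals = defaultdict(lambda: [0, 0])  # band -> [calls, conversions]
    for skill_score, calls, conversions in reps:
        bucket = totals[band_for(skill_score)]
        bucket[0] += calls
        bucket[1] += conversions
    return {band: won / calls for band, (calls, won) in totals.items() if calls}


# Hypothetical scores on "using customer success stories".
reps = [(9.0, 100, 18), (8.0, 200, 36), (6.5, 100, 12), (5.0, 50, 6)]
print(conversion_by_band(reps))
```

A gap between bands is a correlation rather than proof of cause, so it works best as a prompt for what to test in coaching.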

Benchmarking performance across teams and time

Scorecards let you compare performance across:

Individuals (who's crushing quota, who needs support)

Teams (which shift or manager generates better results)

Time periods (are we improving month-over-month)

Products (which offerings close easiest)

This visibility reveals where to invest resources. If Team A outperforms Team B despite similar lead quality and comp plans, Team A's manager is doing something worth replicating. If performance drops every Friday afternoon, you might have a fatigue or scheduling issue affecting close rates.

Criteria for choosing the right scorecard approach

The wrong scorecard system creates more work than value. Choose based on these factors before committing:

Scalability

Your scorecard needs to work whether you're evaluating 10 reps or 500. Manual evaluation works for small teams but becomes impossible at scale.

How many calls can your managers review? You have 100 reps taking 80 calls daily. That's 8,000 interactions per day. Even reviewing 5% means evaluating 400 calls daily.
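The review-load arithmetic is easy to rerun for your own team size. In this sketch, the 10 minutes per review is an assumed figure for illustration only; substitute whatever your managers actually need per call.

```python
def daily_review_load(
    reps: int,
    calls_per_rep: int,
    sample_rate: float,
    minutes_per_review: float = 10.0,  # assumption, not a benchmark
) -> tuple[int, float]:
    """Return (calls to review per day, manager hours required per day)."""
    calls_to_review = round(reps * calls_per_rep * sample_rate)
    hours = calls_to_review * minutes_per_review / 60
    return calls_to_review, hours


calls, hours = daily_review_load(reps=100, calls_per_rep=80, sample_rate=0.05)
print(calls, round(hours, 1))
```

At 10 minutes per call, the 400 daily reviews in the example would take roughly 67 manager hours every day, which shows why manual review stops working at this scale.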

Can your scoring system handle growth? Adding reps, products, or locations should take minimal process changes. Spreadsheet-based scorecards break down fast. Look for platforms that automate score collection and reporting.

Integrations with dialers and CRM systems

Standalone scorecards create manual work. Your scoring system should connect to:

Dialer platforms so managers can review calls without downloading files or switching systems

CRM systems to connect performance scores to prospect data, deal stages, and conversions

Workforce management tools to connect performance scores to scheduling, training, and capacity planning

Commission systems to tie scorecard results to compensation calculations

Cost and complexity

Budget matters. So does implementation time and ongoing maintenance.

Initial investment includes:

Software licensing or platform fees

Integration and setup costs

Training for managers and team leads

Time to build evaluation criteria and weights

Ongoing costs include:

Subscription or usage-based pricing

Manager time for manual evaluation

Calibration sessions and quality checks

System updates and maintenance

Start with a pilot program before committing to enterprise-wide rollout. Test your scorecard approach with one team for 4-6 weeks. Measure the impact on conversion rates and quota attainment. Scale what works.

Customization capabilities

No two sales floors operate the same. Your scorecard should adapt to your specific needs.

Evaluation criteria should reflect what drives conversions in your market and product category.

Weighting schemes should align with business priorities.

Scoring scales should match how your team thinks about performance: 1-5, 1-10, or pass/fail.

Reporting views should show each role what it needs. Reps see personal scores. Directors see team trends and the impact on the pipeline.

Off-the-shelf scorecards rarely fit your needs. Can you change evaluation forms without vendor help? Can you add custom metrics specific to your products or objection patterns? Can you create different scorecards for different product lines or sales motions?

Implementation strategy: Rolling out scorecards that work

Here's how to roll out your sales scorecard without triggering resistance or confusion.

Start with a pilot program

Don't launch scorecards across your entire sales floor on day one. Pick one team (10-15 reps) with a supportive manager. Run a 4-6 week pilot.

What to test:

Do your evaluation criteria measure what drives conversions?

Can managers complete evaluations in a reasonable time?

Do reps understand their scores and how to improve?

Does the data reveal actionable coaching opportunities?

Success metrics:

Manager completion rate (are they finishing scorecards on schedule?)

Rep engagement (are they reviewing scores and asking questions?)

Performance improvement (are conversion rates trending up after coaching?)

Time investment (is the juice worth the squeeze?)

Gather feedback from all stakeholders: reps, managers, team leads. Find what confuses them, what works, and what criteria need changes. Fix those issues before scaling to other teams.

Calibrate managers to remove scoring drift

Many managers evaluating calls means many interpretations of what "good selling" looks like. Without calibration, one manager's "8" becomes another's "6."

Run monthly calibration sessions:

All managers score the same 3-5 calls on their own

Compare scores and discuss discrepancies

Agree on specific examples of each rating level

Document decisions to maintain consistency

Create clear scoring rubrics. Instead of "rate objection handling 1-5," define what each level means:

5: Acknowledges concern, asks clarifying questions, reframes into a value discussion, and closes with success

4: Acknowledges concern, provides reasonable response, maintains momentum

3: Addresses objection but doesn't resolve prospect's concern in full

2: Defensive response or gives up too fast

1: Ignores objection or becomes argumentative

Build human oversight into automated systems

AI-powered scorecards can analyze every single call you make. But they miss the selling context that only humans can catch.

Set up escalation rules:

Scores that deviate from the rep's average by a wide margin trigger manager review

High-value deals or strategic accounts get manual verification

Unusual prospect objections get spot-checked

First instance of any new objection type gets the manager's evaluation

Create feedback loops: When managers disagree with AI scoring, document why. Feed that information back to improve the algorithm. This continuous refinement makes automated scoring more accurate over time.

Avoid common pitfalls that sabotage adoption

Measuring too much: Scorecards with 20+ criteria overwhelm managers and reps. Nobody can focus on improving 20 things at once. Start with 5-8 core criteria. Nail those. Then expand.

Scoring without coaching: Scorecard data needs to drive actual coaching conversations. Every evaluation should connect to specific skill development.

Punitive approach: Scorecards that only appear during PIP discussions create fear and resistance. Frame scoring as a development tool. Share both wins and improvement areas.

Ignoring rep input: Reps know which criteria feel fair and which feel arbitrary. Ask them to review evaluation forms before launch. When they help design the system, they're more likely to trust and engage with it.

Inconsistent frequency: Score 5 calls one week, then skip three weeks? You get no useful data. Pick a schedule like 3 evaluations per rep per week and stick to it.

No follow-up on scores: High scores need recognition. Low scores need coaching. Skip either one and you kill motivation. Celebrate improvements in public. Provide specific coaching in private. Tie scorecard performance to commission bonuses when it makes sense.

What tools help track call center scorecard metrics?

Here's what's available across different budget and complexity levels.

| 🛠️ Tool type | 💡 Examples | ✅ Pros | ❌ Cons | 🎯 Best for |
| --- | --- | --- | --- | --- |
| Spreadsheets | Excel, Google Sheets | Free, flexible, complete control | Doesn't scale, manual data entry, prone to errors | Teams under 15 reps testing scorecard criteria |
| QA Platforms | Five9 quality management, NICE Quality Central, Calabrio | Standardized forms, manager calibration, automated reporting | Requires integration, licensing costs, IT support | Established sales centers with dedicated coaching teams |
| CRM Integrations | Salesforce, Five9, Genesys built-in modules | Data flows on autopilot, unified reporting, no new system to learn | Limited QA features, requires platform expertise | Organizations wanting basic scorecard functionality in existing systems |

Build smarter call center scorecards to drive quota attainment

Call center agent scorecards measure what separates top performers from underperformers. They track the specific behaviors and metrics that drive conversions.

Start with 5-8 core criteria that match your revenue goals. Mix hard numbers with selling skills.

Hard numbers include conversion rate, talk time, and appointments set.

Selling skills include objection handling, value communication, and closing techniques.

Weight each one based on what improves conversions in your business. Forget what sounds impressive in leadership meetings.

Test it with one team for 4-6 weeks first. Get your managers calibrated each month so scores stay consistent. Then use those scores to spark real coaching conversations, not to add another box to check on performance reviews.

Coach what actually closes deals

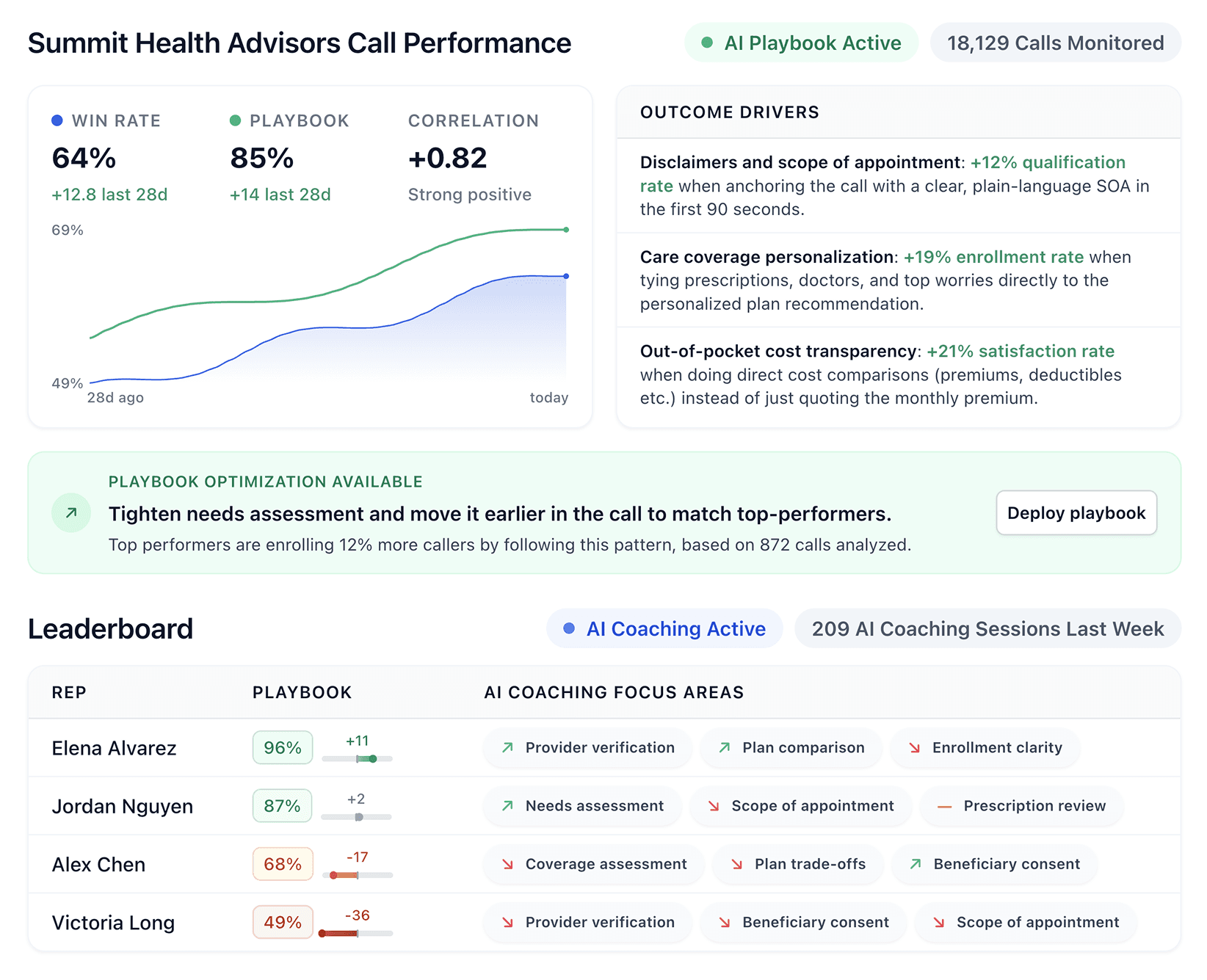

Traditional call center agent scorecards track what you think matters. Alpharun tracks what actually works.

Your top rep closes at 22% while everyone else hovers at 12%. You know they're doing something different. But what? You can't tell what happens in those critical moments when deals close or collapse.

Alpharun analyzes every single call to find the exact behaviors that separate closers from callers. It catches the objection-handling techniques your best reps use. The specific phrases that build urgency without destroying trust. The timing patterns that turn hesitant prospects into buyers.

Then it teaches those same moves to your entire team. Your average performers start closing like your top 10% used to.

That's not guesswork. That's your own playbook, scaled across everyone. See how Alpharun turns your best reps' instincts into your entire team's playbook. Book a demo with Alpharun today.

Frequently asked questions

1. How do call center scorecards work?

Call center scorecards work by splitting each sales call into weighted categories, scoring each one on a 1 to 5 or 1 to 10 scale, and calculating a total performance score.

Categories typically include sales process adherence, selling skills like objection handling and closing technique, and efficiency metrics like conversion rate, AHT, and FCR. Scoring happens through manual manager review, automated AI analysis, or a hybrid of both.

2. How do you create a call center QA scorecard?

To create a call center QA scorecard, identify 5 to 8 criteria that drive conversions, weight them by revenue impact, and define what each rating level looks like with specific behavioral examples.

Mix quantitative metrics like conversion rate and AHT with qualitative factors like objection handling and closing technique, then pilot with one team before scaling.

3. How often should you update a call center agent scorecard?

Most teams update their call center agent scorecard quarterly, adjusting criteria based on market conditions, new products, or shifting performance data. Minor tweaks make sense any time you spot consistent scoring gaps or new objection patterns emerging across your team.

4. What is the difference between QA monitoring and a scorecard?

The main difference between QA monitoring and a scorecard is that monitoring defines the process (what to review, how often, and who evaluates), while a scorecard is the tool used during that review to apply criteria, weights, and ratings. You need both for an effective quality assurance program.

5. Can scorecards be customized for inbound vs. outbound calls?

Yes, call center scorecards should be customized for inbound and outbound calls because each sales motion requires different success criteria.

Inbound scorecards focus on qualification and needs discovery since prospects initiated contact, while outbound scorecards prioritize strong openers and objection handling since reps are reaching out first.

6. What metrics should a call center agent scorecard include?

A call center agent scorecard should include a mix of quantitative metrics like conversion rate, AHT, FCR, and First Response Time, alongside qualitative criteria like objection handling, value communication, closing technique, and script adherence.

Weight each metric based on its direct impact on revenue in your specific sales motion.