Written by Zoë

Reviewed by Paul Dornier

Evaluating calls without a clear form leads to inconsistent feedback, confused reps, and coaching that doesn't stick. This guide covers what call center agent evaluation forms are, why they matter, and how to use them to improve performance at scale.

4 key benefits of call center agent evaluation forms

Evaluation forms matter for high-volume call centers because they create a consistent way to measure performance, connect behavior to results, and make coaching more actionable across every rep.

They create consistency across every rep

Evaluation forms give every manager the same lens when reviewing calls, keeping scoring aligned rather than drifting based on individual judgment. A strong call should be recognized the same way across the team because the underlying behaviors are clearly defined.

That clarity carries over to reps as well. When expectations are explicit, reps can focus on the behaviors that drive results rather than trying to interpret feedback that varies from one review to the next.

They connect behavior to outcomes

A well-structured evaluation form links what happens in the call to what shows up in the numbers. Managers can tie specific actions directly to outcomes like conversion or resolution.

Structured coaching programs can improve win rates by 28% and increase productivity by as much as 88%, showing how much impact clear, behavior-based feedback can have.

When reps see that a specific behavior leads to better results, feedback becomes something they can apply on the next call.

They make strong performance repeatable

High-performing reps often rely on instincts that are hard to explain but easy to recognize once surfaced. Ebsta’s analysis shows that in many teams, around 17% of reps generate 81% of revenue.

Evaluation forms capture what those reps do differently and turn it into something the rest of the team can follow, making strong performance repeatable instead of isolated.

They support compliance and reduce risk

In industries like Medicare and health insurance, every call carries compliance requirements that need to be followed consistently. Evaluation forms bring those checks into the scoring process, so required disclosures, approved language, and verification steps are reviewed alongside performance.

This keeps compliance tied to day-to-day execution, which reduces risk and makes it easier to maintain standards as call volume grows.

Best practices for creating effective evaluation forms

Effective call center agent evaluation forms are simple to use, directly tied to call outcomes, and applied the same way by every manager. When those three things are in place, scoring stays consistent, and feedback becomes easier for reps to act on.

Before getting into each principle, here’s a quick view of what matters most:

| ⚙️ Practice | 🔍 What it focuses on | 💡 Why it matters |
| --- | --- | --- |
| Start with a template | Structure and baseline | Reduces setup time and keeps scoring consistent |
| Align with outcomes | Conversion, resolution, compliance | Ensures every question ties to real results |
| Mix objective and subjective | Process + quality | Captures both execution and call quality |
| Keep it focused | High-impact behaviors | Improves scoring consistency and usability |
| Calibrate regularly | Manager alignment | Keeps scoring reliable across the team |

A strong evaluation form works because it reflects how calls actually happen and what actually drives results, so each of these practices helps move it in that direction.

1. Start with a template, then adapt it to your calls

A template gives you a starting structure that helps keep things consistent across managers, rather than everyone building their own version. The real value comes from shaping that structure around how your calls actually work.

What that looks like in practice:

Call flow: Does the form follow how your reps actually move through a conversation?

Product complexity: Are you capturing the moments where reps need to explain or clarify details?

Compliance requirements: Are required disclosures and steps built directly into the scoring?

What works for a Medicare sales call will look different from a home services inquiry, so the form needs to reflect those differences.

The closer the structure matches real calls, the easier it is to evaluate performance without forcing managers to translate between the form and the conversation.

2. Align scoring with business outcomes

Every item on the form should map back to something measurable, whether that’s conversion, resolution, or compliance. When the criteria drift away from outcomes, the form starts capturing activity that doesn’t change performance.

A useful way to pressure-test each question:

Does this tie to conversion or resolution?

Would coaching change if this score improved or dropped?

Does this reflect a behavior that shows up in winning calls?

If the answer is unclear, the criteria are likely adding noise. Keeping the form focused on outcome-linked behaviors makes scoring easier to interpret and gives reps clearer direction on what to improve.
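One way to run this pressure test on data you already have is to compare how often each criterion is met on converted calls versus calls that did not convert. The sketch below assumes each reviewed call is stored as a dict with yes/no results per criterion plus a `converted` flag. The function name, field names, criterion names, and sample records are all hypothetical and only illustrate the comparison.

```python
from collections import defaultdict

def pass_rate_gap(calls):
    """Return {criterion: pass rate on converted calls - pass rate on other calls}."""
    # counts[criterion][converted] = [times passed, times scored]
    counts = defaultdict(lambda: {True: [0, 0], False: [0, 0]})
    for call in calls:
        for criterion, passed in call["criteria"].items():
            bucket = counts[criterion][call["converted"]]
            bucket[0] += int(passed)
            bucket[1] += 1

    gaps = {}
    for criterion, by_outcome in counts.items():
        won_pass, won_total = by_outcome[True]
        lost_pass, lost_total = by_outcome[False]
        if won_total == 0 or lost_total == 0:
            continue  # cannot compare without calls from both outcomes
        gaps[criterion] = won_pass / won_total - lost_pass / lost_total
    return gaps

# Hypothetical reviewed calls (illustrative data only).
calls = [
    {"converted": True,  "criteria": {"asked_needs": True,  "used_caller_name": True}},
    {"converted": True,  "criteria": {"asked_needs": True,  "used_caller_name": False}},
    {"converted": False, "criteria": {"asked_needs": False, "used_caller_name": True}},
    {"converted": False, "criteria": {"asked_needs": False, "used_caller_name": False}},
]

gaps = pass_rate_gap(calls)
for criterion, gap in sorted(gaps.items(), key=lambda kv: -kv[1]):
    print(f"{criterion}: {gap:+.2f}")
```

A gap near zero suggests the criterion may not distinguish winning calls and could be a candidate for removal. A large gap is a prompt for closer review, not proof of cause, especially on a small sample.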

3. Balance objective steps with call quality

Imagine two calls where both reps check every required box. The disclosure is read, the questions are asked, and the process is followed, yet one call converts and the other falls flat.

The difference usually comes down to how the interaction unfolds:

Objective steps: Was the disclosure read? Were the required questions asked?

Call quality: Did the rep sound confident? Did they handle pushback smoothly? Did the explanation actually land with the caller?

A form that combines both gives you a clearer view of performance. Objective checks keep scoring consistent and comparable, while qualitative criteria capture how well the rep handled the moments that influence whether the caller moves forward.

4. Keep the form focused on high-impact behaviors

More fields don’t lead to better evaluations. As forms grow longer, attention spreads thinner and scoring becomes less consistent, especially when managers are reviewing calls under time pressure.

Focusing on a smaller set of high-impact behaviors keeps evaluations sharper and easier to apply. When each section directly connects to outcomes, the form becomes faster to use and more reliable across different reviewers.

5. Calibrate scoring so results stay comparable

Even with a clear structure, differences in interpretation can show up over time. Regular calibration sessions help keep scoring aligned by giving managers a shared reference point for what each rating means in practice.

When multiple reviewers score the same call and compare results, gaps become visible and easier to resolve. That alignment is what allows evaluation data to be used confidently for coaching, comparisons, and trend tracking.
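A calibration session can start from a simple comparison like the one sketched below: several managers score the same call, and any criterion where their scores sit far apart is flagged for discussion. The function name, the reviewer and criterion names, the 1–5 scores, and the one-point tolerance are assumptions chosen for illustration.

```python
from statistics import mean

def calibration_gaps(scores_by_reviewer, tolerance=1):
    """Flag criteria where reviewers' scores for the same call differ by more than `tolerance`."""
    criteria = set()
    for scores in scores_by_reviewer.values():
        criteria.update(scores)

    report = {}
    for criterion in sorted(criteria):
        values = [s[criterion] for s in scores_by_reviewer.values() if criterion in s]
        spread = max(values) - min(values)
        report[criterion] = {
            "mean": mean(values),
            "spread": spread,
            "needs_discussion": spread > tolerance,
        }
    return report

# Hypothetical 1-5 scores from three managers on the same call.
scores_by_reviewer = {
    "manager_a": {"tone": 4, "objection_handling": 2, "clarity": 4},
    "manager_b": {"tone": 4, "objection_handling": 4, "clarity": 3},
    "manager_c": {"tone": 5, "objection_handling": 5, "clarity": 4},
}

report = calibration_gaps(scores_by_reviewer)
for criterion, row in report.items():
    flag = "discuss" if row["needs_discussion"] else "aligned"
    print(f"{criterion}: spread={row['spread']} ({flag})")
```

In this made-up example, tone and clarity are within one point across reviewers, while objection handling ranges from 2 to 5, so that is the criterion worth replaying and defining together.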

How to use call center evaluation forms for coaching

Using evaluation forms effectively comes down to turning scores into consistent, actionable coaching that reps can apply on their next call.

| 🧠 Coaching practice | 🔍 What it looks like | 💡 Example in practice |
| --- | --- | --- |
| Use them on a consistent cadence | Reviews happen on a fixed schedule | Manager reviews recent calls every Tuesday and flags 1-2 focus areas per rep before the next shift. |
| Tie feedback to real call moments | Feedback is grounded in real calls | “At 1:32, the caller asked about coverage, and you moved to pricing without clarifying their needs.” |
| Create a two-way feedback loop | Reps can respond and question scores | The rep questions a low objection score, and the manager replays the call to align on what a strong response should sound like. |
| Track trends over time | Focus on repeated patterns, not single calls | Rep consistently misses follow-up questions across multiple calls, even though single-call scores vary. |
| Turn insights into specific actions | Coaching ends with clear next steps | “On your next calls, pause after objections and restate the concern before responding.” |

Use them on a consistent cadence

When evaluations only happen after a bad call, they feel reactive and inconsistent. A weekly or biweekly cadence builds them into the team’s rhythm, so feedback becomes expected and easier to act on.

Reps perform more steadily when they know what’s coming and when.

Tie feedback to real call moments

General feedback gets ignored because it lacks context. Referencing exact moments in a call changes that.

Instead of pointing to a low score, show where the conversation shifted and why it mattered. Using timestamps and real examples makes the feedback concrete and easier to apply.

Create a two-way feedback loop

Coaching works better when it’s a conversation. Giving reps space to question or discuss their scores often surfaces gaps in how the criteria are applied.

It also builds trust in the process, since reps understand how decisions are made and where they can improve.

Track trends over time

Single calls rarely tell the full story. Patterns across multiple calls show where a rep consistently struggles or improves. Looking at trends helps managers focus on what actually needs attention and avoid overreacting to one-off results.
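As a rough sketch of what trend tracking can look like, the snippet below averages each criterion across a rep's reviewed calls and flags only those that stay low over several calls. The function name, record layout, rep and criterion names, the 3.0 threshold, and the three-call minimum are hypothetical choices, not a recommended standard.

```python
from collections import defaultdict
from statistics import mean

def recurring_gaps(evaluations, threshold=3.0, min_calls=3):
    """Return {rep: [criteria whose average across calls is below `threshold`]}."""
    scores = defaultdict(lambda: defaultdict(list))
    for ev in evaluations:
        for criterion, score in ev["scores"].items():
            scores[ev["rep"]][criterion].append(score)

    flagged = {}
    for rep, by_criterion in scores.items():
        weak = [
            criterion
            for criterion, values in by_criterion.items()
            # Require enough calls so a single bad score cannot trigger a flag.
            if len(values) >= min_calls and mean(values) < threshold
        ]
        if weak:
            flagged[rep] = sorted(weak)
    return flagged

# Hypothetical 1-5 scores for one rep across three calls.
evaluations = [
    {"rep": "rep_1", "scores": {"follow_up_questions": 2, "tone": 5}},
    {"rep": "rep_1", "scores": {"follow_up_questions": 3, "tone": 2}},
    {"rep": "rep_1", "scores": {"follow_up_questions": 2, "tone": 4}},
]

flagged = recurring_gaps(evaluations)
print(flagged)
```

Here the rep's tone score dips once but averages well, so it is not flagged, while follow-up questions stay low on every call and surface as the pattern to coach.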

Turn insights into specific actions

Each session should end with a clear focus. Instead of summarizing performance, define one or two behaviors the rep will work on in their next calls. Specific actions are easier to execute and easier to measure in future reviews.

When evaluation forms are used this way, they become part of a continuous coaching loop, where feedback is consistent, grounded in real calls, and tied directly to how reps improve over time.

What to include in a call center agent evaluation form

A call center evaluation form should include the key phases of a call, with each section tied to behaviors that directly impact outcomes.

Core sections every form should cover

A strong evaluation form mirrors how a call actually unfolds, so each section lines up with a moment when the rep either moves the conversation forward or loses it. That structure makes scoring easier to apply and coaching easier to act on.

| 📊 Section | 🔍 What to evaluate | 💡 Why it matters |
| --- | --- | --- |
| Opening | Greeting tone, professionalism, required disclosures | Sets the direction of the call. A weak start often leads to lower engagement later. |
| Discovery | Qualifying questions, understanding caller needs | Calls break down here when reps pitch too early without enough context. |
| Explanation | Clarity, accuracy, connection to caller needs | Clear explanations reduce confusion and make decisions easier. |
| Objection handling | Composure, how concerns are addressed | This is where top performers stand out, and conversion is won or lost. |
| Close | Clear decision ask or next step | Calls without direction usually reflect earlier gaps in the conversation. |
| Compliance | Disclosures, approved language, required steps | Protects performance and reduces regulatory risk, especially in Medicare and insurance. |

These sections map directly to where calls succeed or stall, so scoring across them gives managers clear coaching points tied to real moments in the conversation.

Scoring structure

The scoring method should be simple enough that managers can apply it consistently without second-guessing what the scores mean.

| ⚙️ Method | 📌 Best used for | 💡 Insight |
| --- | --- | --- |
| Yes/No | Compliance, required steps | Works when behavior is binary and easy to verify. |
| 1–5 scale | Tone, clarity, objection handling | Captures nuance where small differences affect outcomes. |
| Weighted scoring | High-impact sections like close or compliance | Aligns scoring with what actually drives results. |

Consistency matters more than the method itself. When every manager scores calls the same way, the data becomes reliable enough to compare reps, track trends, and connect behavior to outcomes.
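To make the three methods concrete, here is a minimal sketch of how they might combine into one score. It assumes yes/no answers map to 0 or 1, 1–5 ratings are rescaled so that 1 becomes 0 and 5 becomes 1, and each section carries a weight. The function names, section weights, and sample answers are illustrative assumptions; your own form may normalize and weight differently.

```python
def section_score(answer, method):
    """Normalize one answer to the 0-1 range."""
    if method == "yes_no":
        return 1.0 if answer else 0.0
    if method == "scale_1_5":
        if not 1 <= answer <= 5:
            raise ValueError(f"scale answers must be 1-5, got {answer}")
        return (answer - 1) / 4
    raise ValueError(f"unknown scoring method: {method}")

def weighted_total(form, answers):
    """Weighted average of normalized section scores, as a percentage."""
    total_weight = sum(weight for _, weight in form.values())
    earned = sum(
        section_score(answers[section], method) * weight
        for section, (method, weight) in form.items()
    )
    return round(100 * earned / total_weight, 1)

# Hypothetical form: section -> (scoring method, weight). Weights are illustrative.
form = {
    "opening": ("scale_1_5", 1),
    "discovery": ("scale_1_5", 2),
    "objection_handling": ("scale_1_5", 2),
    "close": ("scale_1_5", 3),
    "compliance": ("yes_no", 3),
}

answers = {
    "opening": 5,
    "discovery": 3,
    "objection_handling": 4,
    "close": 3,
    "compliance": True,
}

print(weighted_total(form, answers))  # 72.7
```

With these weights, flipping compliance to a miss drops the same call from 72.7 to 45.5, which shows how weighting pushes the total toward the sections that matter most.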

Common challenges with evaluation forms

Most challenges with call center agent evaluation forms come from how the forms are applied rather than how they are designed, which is why many teams see limited impact on performance. Gaps in coverage, consistency, and timing make feedback harder to trust and act on.

Limited call coverage

Most teams review only a small slice of total calls because there aren’t enough hours in the day. What managers see then depends on which calls happened to be picked, while recurring behaviors in the unreviewed calls stay hidden.

As a result, it’s harder to tell whether performance reflects a real pattern or a few isolated interactions, which limits how confidently managers can coach.

Teams that evaluate a larger share of interactions often see customer satisfaction improve by 25% to 35% over time, highlighting how much insight is missed when most calls go unreviewed.

Time-heavy process

Manual reviews don’t scale cleanly as call volume grows. Each evaluation takes focused time, and as that time competes with other priorities, reviews start to lag or get compressed.

Over time, that pressure affects how thoroughly calls are evaluated and how consistently feedback is delivered across the team.

Inconsistent scoring

A rubric provides structure, but interpretation still varies in practice. Two managers can listen to the same call and weigh tone, phrasing, or objection handling differently based on their own judgment.

When those differences show up in scores, it becomes harder to compare performance across reps or use the data as a reliable baseline.

Delayed feedback

Timing plays a big role in whether feedback leads to change. When there’s a gap between the call and the review, the context around that interaction becomes harder to recall, and the same behavior may already be repeating.

Feedback that stays close to the call tends to be clearer, easier to connect to specific moments, and more likely to influence what happens next.

When call center agent evaluation starts to break down

Scaling call center agent evaluation as your team grows means moving beyond manual reviews and building a system that can keep up with increasing call volume.

As teams grow, evaluation forms rarely break because of their design. What breaks is the process around them, which struggles to keep up with call volume. Early on, manual reviews work: managers can cover enough calls, give timely feedback, and track improvement with a clear view of performance.

As headcount and call volume increase, a few patterns start to show up:

Coverage drops: Managers can only review a smaller percentage of total calls

Feedback slows down: Coaching happens further from the actual interaction

Patterns stay hidden: Repeated issues across calls don’t surface quickly

At this stage, the challenge moves beyond the form itself. Even a well-built evaluation form loses impact when it’s applied to a shrinking sample of calls.

This is where evaluation becomes an infrastructure problem. Without a system that can handle volume, managers spend more time catching up than coaching, and performance starts to level off across the team.

Why evaluation breaks as teams grow, and how Alpharun helps

At a small team size, coaching works because managers can stay close to the calls. As the team grows, that starts to break down. Fewer calls get reviewed, feedback takes longer, and the same issues repeat across the team.

Even with a strong call center agent evaluation form in place, coverage drops as call volume increases, and it becomes harder to see what’s actually happening across conversations.

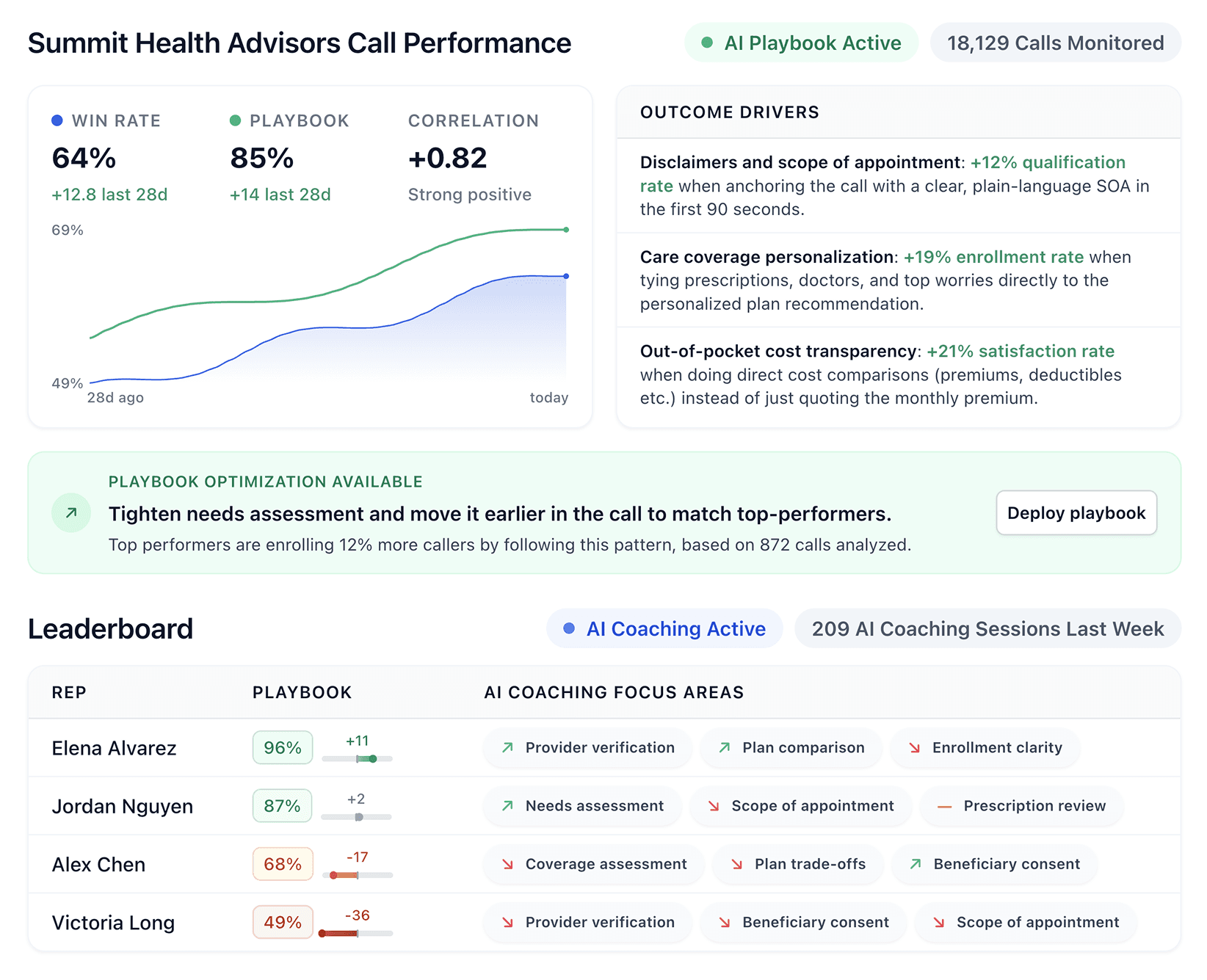

Alpharun evaluates every call against your framework, so coaching is based on complete data instead of a limited sample. It integrates with platforms like Five9 and Genesys, so most teams can get up and running in about a week without changing their existing workflow.

Here’s what becomes obvious once this is in place:

Every call is evaluated automatically: You’re no longer guessing based on a handful of calls. You can see how every rep performs across the full day.

Recurring issues surface early: The same missed step or weak explanation shows up quickly, before it repeats across dozens of conversations.

Top-performer behavior becomes visible: You can hear what your best reps do differently and spot those patterns across the team.

Reps receive feedback tied to their own calls: Instead of general advice, they see the exact moment where the call shifted and what to do next time.

Managers get a clear view of team-wide trends: Performance patterns are visible at a glance, without digging through calls one by one.

The result is a balance most teams struggle to reach: AI handles the heavy lifting across every call, while managers stay focused on coaching, context, and judgment where they matter most.

If manual QA is falling behind, Alpharun keeps evaluation and coaching aligned with what’s actually happening on calls. Book a demo to see how it works on your team.

Frequently asked questions

What is a call center agent evaluation form?

A call center agent evaluation form is a structured scorecard used to assess how agents handle calls based on defined behaviors and outcomes like quality, compliance, and conversion.

What should be included in a call center agent evaluation form?

A call center agent evaluation form should include key call phases like opening, discovery, explanation, objection handling, close, and compliance, each tied to specific scoring criteria.

Why are call center agent evaluation forms important?

Call center agent evaluation forms are important because they create consistent scoring, connect rep behavior to outcomes, and make coaching more actionable across the team.

How often should call center agents be evaluated?

Call center agents should be evaluated weekly or biweekly to ensure feedback stays timely, relevant, and tied to recent call behavior.

How many calls should be reviewed per agent?

Call center teams should review as many calls as possible, but manual QA limits coverage. AI-based tools can evaluate 100% of calls and surface patterns for coaching.