A call center quality monitoring form gives your team a clean, consistent way to review calls and coach reps with precision. This template shows you how to score the moments that drive quality so that you can raise performance across the entire team.

Call center quality monitoring form template

| 🧩 Form section | 🎯 Purpose | 📋 Example scoring items |
| --- | --- | --- |
| 1. Call opening | Start the conversation effectively | Greeting script, recording disclosure, name usage |
| 2. Needs assessment and verification | Verify identity and understand the customer's situation | Identity verification, request type, eligibility confirmation |
| 3. Call etiquette and professionalism | Evaluate tone, language, and composure | Tone of voice, pace, professionalism under pressure |
| 4. Hold and transfer procedures | Score protocol during holds and transfers | Permission before hold, wait time communication, transfer handoff |
| 5. Resolution or product explanation | Evaluate the clarity of explanation or resolution | Tailored explanation, plain language, customer comprehension confirmed |
| 6. Objection handling | Measure effectiveness when customers raise concerns | Acknowledged concern, specific response, tone maintained |
| 7. Compliance and process adherence | Track regulatory and company requirements | Required disclosures, CRM documentation, authorizations |
| 8. Call resolution and next steps | Score call close and post-call follow-through | Confirmed resolution, next steps explained, CRM documented |
| 9. Overall evaluation and coaching notes | Complete assessment and coaching guidance | IQS score, strengths noted, specific coaching recommendation |

Now let's break down what to include in each section and how to score it.

9 key sections for your quality monitoring form template

A strong quality monitoring form breaks each call into clear, measurable sections. It helps you spot coaching gaps and support the behaviors that help reps handle every interaction effectively. Use this structure to score performance:

Section 1: Call opening and rapport building

Score how effectively the rep starts the conversation and establishes credibility.

Evaluate whether the agent:

Used the proper greeting (company name, agent name, purpose of call)

Stated the call recording disclosure in clear terms

Asked for and used the customer's name

Confirmed the customer had time to talk

Set clear expectations for the call

Scoring approach: Rate each item as Yes/No or use a 1-5 scale. Include space for notes on what the rep did well and what they should improve.
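If your form mixes Yes/No items with 1-5 ratings, you need a common scale before you can total a section. The sketch below is one way to do that, not a prescribed method. The item names and ratings are hypothetical, and mapping a rating of 1 to zero credit is an assumption you may want to change.

```python
from typing import Union


def normalize_rating(rating: Union[bool, int]) -> float:
    """Convert a Yes/No answer or a 1-5 rating into a 0.0-1.0 fraction."""
    # bool must be checked first because True/False are also ints in Python.
    if isinstance(rating, bool):
        return 1.0 if rating else 0.0
    if not 1 <= rating <= 5:
        raise ValueError("scale ratings must be between 1 and 5")
    # Assumption: 1 earns no credit, 5 earns full credit (1 -> 0.0, 3 -> 0.5).
    return (rating - 1) / 4


# Hypothetical Section 1 ratings for a single call.
call_opening = {
    "proper_greeting": True,
    "recording_disclosure": True,
    "used_customer_name": False,
    "confirmed_time_to_talk": 4,
    "set_expectations": 3,
}

section_score = sum(normalize_rating(r) for r in call_opening.values()) / len(call_opening)
print(f"Call opening: {section_score:.0%}")  # prints "Call opening: 65%"
```

Whichever mapping you choose, apply it the same way across every evaluator so section scores stay comparable.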

Section 2: Needs assessment and verification

Verify that the rep gathered the information needed to understand the customer's situation and move the interaction forward.

Check that your agent covered each of these steps:

Verified customer identity following security protocol

Identified the nature of the request (support, complaint, inquiry, or purchase)

Asked all the required questions to understand the customer's situation

Listened actively and clarified unclear responses before moving forward

Confirmed account, case, or eligibility details before proceeding

For support teams: Confirm the rep covered ticket category, escalation triggers, and resolution authority before proceeding.

For sales teams: Add industry-specific qualification criteria. Medicare teams might track "confirmed current coverage details." Home services might include "assessed property conditions relevant to service."

Section 3: Call etiquette and professionalism

Score whether the rep maintained a professional, respectful tone throughout the entire interaction.

Your form should score whether the agent:

Used a calm, professional tone of voice

Spoke clearly and at an appropriate pace

Avoided interrupting the customer while they were speaking

Used polite, professional language at all times

Avoided filler words, slang, or overly casual expressions

Remained composed when handling frustrated or difficult customers

Scoring tip: Etiquette issues are easy to overlook on content-heavy calls. Score this section independently before evaluating what the agent said.

Section 4: Hold and transfer procedures

Score whether the rep followed proper protocol when placing a customer on hold or transferring the call.

For each call, confirm the agent:

Asked for permission before placing the customer on hold

Provided an estimated wait time before initiating the hold

Checked back within the committed time frame

Thanked the customer for holding upon returning to the call

Explained the reason for a transfer before initiating it

Introduced the customer and summarized the situation to the receiving agent

Confirmed the customer was comfortable with the transfer before proceeding

Scoring tip: Hold-and-transfer moments are high-risk points for customer dissatisfaction. A rep who handles the content of a call well but mishandles a hold or transfer can still leave the customer with a negative experience.

Section 5: Resolution or product explanation

Check how well the rep explained the resolution, product, or next steps to the customer.

Score the agent on whether they:

Tailored explanation to the customer's specific situation

Explained key benefits, options, or resolution steps clearly

Used plain language instead of jargon or technical terms

Provided specific examples, precedents, or relevant scenarios

Connected the explanation to the customer's stated concern

Confirmed the customer understood before moving forward

Scoring tip: Don't just track whether the rep gave an explanation. Score whether the explanation addressed what the customer actually needed to hear.

Section 6: Objection handling

Measure the rep's effectiveness when customers raise concerns.

Note whether the agent:

Acknowledged objection without getting defensive

Asked clarifying questions to understand the real concern

Provided a specific response that addressed the objection

Confirmed with the customer that the concern was resolved

Maintained positive tone throughout

For high-volume teams: Track which objections come up most often and which handling approaches work best. This data shows you where to focus coaching effort.
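As a rough illustration of that tracking, the sketch below tallies how often each objection comes up and how often each handling approach resolves it. The log entries, objection labels, and approach names are all invented for the example. In practice they would come from your completed forms.

```python
from collections import Counter, defaultdict

# Hypothetical log entries: (objection type, handling approach, resolved?)
objection_log = [
    ("price", "value comparison", True),
    ("price", "discount offer", False),
    ("price", "value comparison", True),
    ("timing", "schedule callback", True),
    ("price", "discount offer", True),
    ("trust", "customer references", False),
]

# How often each objection appears.
frequency = Counter(objection for objection, _, _ in objection_log)

# (objection, approach) -> [resolved count, total count]
outcomes = defaultdict(lambda: [0, 0])
for objection, approach, resolved in objection_log:
    outcomes[(objection, approach)][1] += 1
    if resolved:
        outcomes[(objection, approach)][0] += 1

print(frequency.most_common(1))  # prints [('price', 4)]
for (objection, approach), (won, total) in sorted(outcomes.items()):
    print(f"{objection} / {approach}: {won}/{total} resolved")
```

With only a handful of calls the resolution counts are noise. The comparison becomes useful once each approach has been logged many times.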

Section 7: Compliance and process adherence

Track regulatory requirements and company policies specific to your business.

At a minimum, confirm the agent:

Provided all required disclosures at the correct points in the call

Documented customer information accurately in CRM

Followed pricing and discount authorization procedures

Obtained necessary verbal agreements or confirmations

Completed required compliance steps for your industry

Customize this section completely to match your operation. Start with simple criteria like recording disclosures, build in your unique process requirements, and add checks to confirm that agents followed your verification steps.

That level of specificity is what separates forms that drive results from generic templates.

Section 8: Call resolution and next steps

Score how well the rep brought the interaction to a clear, complete close and followed through after the call.

Assess whether the agent:

Confirmed the issue was resolved, or next steps were agreed upon

Addressed final questions before ending the call

Explained next steps in clear, specific terms

Provided case or ticket reference number if applicable

Thanked the customer and ended the call respectfully

Documented the interaction in CRM immediately after the call

Completed all required post-call wrap-up tasks within the established time standard

For sales teams: Also score whether the rep asked for the sale and handled final objections effectively.

Key metric: For support teams, track first call resolution (FCR) and customer satisfaction (CSAT) scores. For sales teams, track conversion rates by rep and closing approach.

Section 9: Overall evaluation and coaching notes

Provide space for complete assessment and specific coaching guidance.

Record the following:

Overall call quality score (1-10 or percentage)

Top strengths demonstrated on this call

Primary area for improvement

Specific coaching recommendation for this rep

Follow-up action items

Share feedback with agents right away and make it specific. “Improve objections” doesn't tell anyone anything.

“When the customer asks about price, use the value-comparison steps in your playbook instead of offering a discount” gives the rep something to act on. That's the difference between feedback that gets ignored and feedback that actually sticks.

How to customize this template for your team

Customize this template so that it measures the actions that matter most for your team.

1. Start with your top performers' calls

Don’t guess what to measure. Instead, review multiple calls from your top performers and look for the exact actions they take that others don’t.

Look for:

Specific phrases they use when handling difficult interactions

Question sequences that move conversations forward effectively

Transition points where they shift from discovery to resolution

Compliance approaches that satisfy requirements while maintaining momentum

These behaviors become your scoring criteria. Your form measures how well everyone else matches them.

2. Add industry-specific compliance criteria

Different operations need different compliance tracking. The criteria vary by industry, but the same logic applies to any regulated operation.

| 🏥 Medicare | 🛡️ Insurance | 🔧 Home services |
| --- | --- | --- |
| Scope of appointment confirmation | State licensing disclosure | Service area confirmation |
| Plan comparison requirements | Premium calculation verification | Estimate validity period |
| Enrollment period verification | Policy term explanation | Cancellation policy explanation |
| Star rating disclosures | Underwriting requirements explained | Warranty terms disclosure |

Build your form around the regulations that govern your specific business.

3. Weight criteria by impact

Not all form items matter equally. Some behaviors have a strong impact on outcomes, while others make little difference to performance.

Assign point values based on importance:

High-impact items (proper verification, effective objection handling): 20 points each

Medium-impact items (rapport building, clear presentation): 10 points each

Lower-impact items (greeting script, call closing): 5 points each

This weighting focuses coaching on behaviors that actually drive results.
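To make the weighting concrete, here is a minimal sketch that totals points for one call using the three tiers above. The point values come from the list. The item names and the pass/fail results are hypothetical.

```python
# Point values follow the tiers above; the item names are hypothetical.
WEIGHTS = {
    "identity_verification": 20,  # high impact
    "objection_handling": 20,     # high impact
    "rapport_building": 10,       # medium impact
    "clear_presentation": 10,     # medium impact
    "greeting_script": 5,         # lower impact
    "call_closing": 5,            # lower impact
}


def weighted_points(passed: dict[str, bool]) -> tuple[int, int]:
    """Return (points scored, points possible) for one evaluated call."""
    scored = sum(WEIGHTS[item] for item, ok in passed.items() if ok)
    possible = sum(WEIGHTS[item] for item in passed)
    return scored, possible


# Hypothetical results for a single call.
call = {
    "identity_verification": True,
    "objection_handling": False,
    "rapport_building": True,
    "clear_presentation": True,
    "greeting_script": True,
    "call_closing": False,
}

scored, possible = weighted_points(call)
print(f"{scored}/{possible} points")  # prints "45/70 points"
```

Missing objection handling costs this call 20 points, while missing the call closing costs only 5. That gap is what steers coaching toward the high-impact behavior.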

4. Include space for sentence-level coaching notes

The most effective coaching references specific call moments rather than vague feedback.

Add fields like:

"Key moment timestamp and what happened"

"What top performers do differently at this moment"

"Specific tactic to use on next call"

This precision helps reps improve faster because they see exactly what to change.
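If you track forms in a spreadsheet or an internal tool, those fields could be modeled as a small record. The sketch below is one possible shape. The class name, field names, and sample note are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass
class CoachingNote:
    """One sentence-level coaching note tied to a moment in a call."""
    timestamp: str               # position in the recording, e.g. "04:32"
    what_happened: str
    top_performer_approach: str
    tactic_for_next_call: str

    def summary(self) -> str:
        # Short form suitable for sending to the rep after the call.
        return (f"[{self.timestamp}] {self.what_happened} "
                f"Next call: {self.tactic_for_next_call}")


# Hypothetical note for a single call moment.
note = CoachingNote(
    timestamp="04:32",
    what_happened="Offered a discount as soon as the customer mentioned price.",
    top_performer_approach="Walk through the value comparison before discussing price.",
    tactic_for_next_call="Use the value-comparison steps in the playbook.",
)
print(note.summary())
```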

5. Use shadowing to calibrate your evaluators

A well-designed form still falls apart if evaluators interpret it differently. Shadowing helps align how scores are applied and keeps evaluations consistent.

Have two evaluators score the same call independently, then compare results

Where scores diverge, discuss why and clarify the criteria

Run sessions at launch, at onboarding, and once a quarter after that

Over time, this process builds a shared standard that no written rubric can fully replace.
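One simple way to prepare for a calibration session is to list the items where two evaluators disagree. The sketch below assumes 1-5 ratings and treats a gap of more than one point as worth discussing. The tolerance, item names, and scores are all assumptions.

```python
def score_gaps(eval_a: dict[str, int], eval_b: dict[str, int],
               tolerance: int = 1) -> dict[str, int]:
    """Return items where two evaluators differ by more than `tolerance`."""
    return {
        item: abs(eval_a[item] - eval_b[item])
        for item in eval_a
        if item in eval_b and abs(eval_a[item] - eval_b[item]) > tolerance
    }


# Hypothetical 1-5 ratings from two evaluators scoring the same call.
evaluator_a = {"tone": 4, "rapport": 5, "verification": 5, "hold_procedure": 2}
evaluator_b = {"tone": 4, "rapport": 2, "verification": 5, "hold_procedure": 4}

for item, gap in score_gaps(evaluator_a, evaluator_b).items():
    print(f"Discuss '{item}': scores differ by {gap}")
```

Here the evaluators agree on tone and verification but split on rapport and hold procedure, so those two definitions are the ones to clarify.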

Why even the best template has limitations at scale

A well-designed quality monitoring form gives you the right scoring criteria. But manual form-filling creates problems for high-volume call center teams.

Manual review doesn't cover enough calls

Managers can spot-check only a small number of calls each week for each rep. In a team of 50 reps making dozens of calls a day, manual review covers only a tiny fraction of the total volume.

This limited view makes it easy to miss patterns, coaching gaps, and compliance risks. By the time a problem surfaces through spot checks, the rep has already repeated the same mistakes across many calls.
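A quick back-of-the-envelope calculation shows how small that fraction can be. Only the team size of 50 comes from the example above. The calls per day, working days, and reviews per rep are assumed figures, so substitute your own.

```python
reps = 50                      # team size from the example above
calls_per_rep_per_day = 30     # assumption: "dozens" taken as 30
working_days_per_week = 5      # assumption
reviews_per_rep_per_week = 5   # assumption: a handful of spot checks

total_calls = reps * calls_per_rep_per_day * working_days_per_week
reviewed_calls = reps * reviews_per_rep_per_week
coverage = reviewed_calls / total_calls

# prints "250 of 7500 calls reviewed (3.3%)"
print(f"{reviewed_calls} of {total_calls} calls reviewed ({coverage:.1%})")
```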

Scoring stays subjective without consistency

Two managers can score the same call differently because forms rely on human judgment. "Good rapport" means different things to different evaluators.

When scoring isn’t consistent, you can’t tell if a rep is improving. Reps also stop trusting the feedback, so the coaching doesn’t stick.

Forms capture snapshots, not trends

Manual forms give you individual call scores. They don't show you patterns across hundreds of calls that reveal coaching priorities.

Your afternoon shift might struggle with an objection your morning team handles well. Or a competitor changed pricing and only half your reps adjusted. You cannot spot these trends by reviewing ten calls a week.
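Once scores exist for enough calls, surfacing a pattern like the shift gap is a simple grouping exercise. The sketch below averages objection-handling scores by shift. The records are invented for illustration, and a real analysis would need far more calls per group.

```python
from collections import defaultdict
from statistics import mean

# Hypothetical per-call records: (shift, objection-handling score out of 5)
call_scores = [
    ("morning", 5), ("morning", 4), ("morning", 5),
    ("afternoon", 2), ("afternoon", 3), ("afternoon", 2),
]

# Group scores by shift.
by_shift = defaultdict(list)
for shift, score in call_scores:
    by_shift[shift].append(score)

# Average per shift, rounded for display.
averages = {shift: round(mean(scores), 2) for shift, scores in by_shift.items()}
print(averages)  # prints {'morning': 4.67, 'afternoon': 2.33}
```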

Feedback arrives too late

Manual review means coaching happens days or weeks after the call. By then, reps have forgotten the conversation details, and the learning opportunity has passed.

Reps need guidance while interactions stay fresh, ideally within 24 hours. Your staff members are 3.6 times more likely to feel motivated to do outstanding work when they get feedback daily rather than annually. Manual processes can't deliver that speed at scale.

These problems of limited coverage, inconsistent scoring, missing trends, and slow feedback don't go away with a better template. They go away when technology handles the scoring for you.

What makes an effective quality monitoring form

Building the form is the easy part. Making it an effective quality monitoring form is where most teams fall short. Here's what separates the ones that work:

Built around your actual process

Different operations need different criteria. Your top performers use approaches tailored to your market, and your form should score agents on those real tactics instead of generic best practices.

The fastest way to find those tactics is to pull your top performers' calls and look for the patterns nobody wrote down. The best behaviors are often the ones that never made it into your training materials.

Measures outcomes alongside behaviors

A strong form evaluates whether the rep followed your process and whether that process actually worked.

Did they apply your verification method correctly?

Did the customer stay engaged through key questions?

Did the conversation move toward a resolution?

Scoring both execution and impact links coaching to outcomes rather than rule-following. It shows managers where reps build momentum and where they lose it. The Internal Quality Score (IQS) turns that assessment into a single number that is concrete and comparable across your entire team.

How to calculate your Internal Quality Score (IQS)

Your IQS converts form results into a single trackable metric. The formula is:

IQS = (Total points scored / Total points possible) x 100

Weight high-stakes items like compliance disclosures more heavily than lower-stakes ones like greeting scripts. Set a minimum acceptable score (e.g., 80%) and a top-performer target (e.g., 95%) to give the number context.
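The formula and thresholds can be sketched in a few lines. The 80% and 95% values are the example thresholds from the text. The band labels and the sample point totals are assumptions.

```python
def iqs(points_scored: float, points_possible: float) -> float:
    """IQS = (total points scored / total points possible) x 100."""
    if points_possible <= 0:
        raise ValueError("points_possible must be positive")
    return points_scored / points_possible * 100


MINIMUM_ACCEPTABLE = 80.0  # example minimum from the text
TOP_PERFORMER = 95.0       # example top-performer target from the text


def classify(score: float) -> str:
    """Place an IQS in a band (band labels are hypothetical)."""
    if score >= TOP_PERFORMER:
        return "top performer"
    if score >= MINIMUM_ACCEPTABLE:
        return "acceptable"
    return "below minimum"


# Hypothetical call: 68 of 80 possible points.
score = iqs(points_scored=68, points_possible=80)
print(f"IQS: {score:.1f}% ({classify(score)})")  # prints "IQS: 85.0% (acceptable)"
```

Because the formula divides by total points possible, forms with different weightings still produce comparable percentages.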

Designed for your compliance requirements

Each industry has its own regulatory expectations. Healthcare requires specific disclosures. Financial services involve licensing rules. Telecommunications carries consumer protection requirements.

A good form builds these criteria into the scoring model instead of tracking them on a separate basic checklist. That keeps calls compliant and aligned with your industry's rules.

Common mistakes when building a quality monitoring form

Even well-intentioned forms fail when built around the wrong assumptions.

Scoring without defined criteria: Two managers will rate the same call differently

Building a form too long to complete: Aim for 25 to 40 items for most operations

Weighting all criteria equally: A greeting script should never outweigh a compliance disclosure

Skipping calibration sessions: Without them, scoring drift is inevitable

Never updating the form: Review and update criteria at least once per quarter

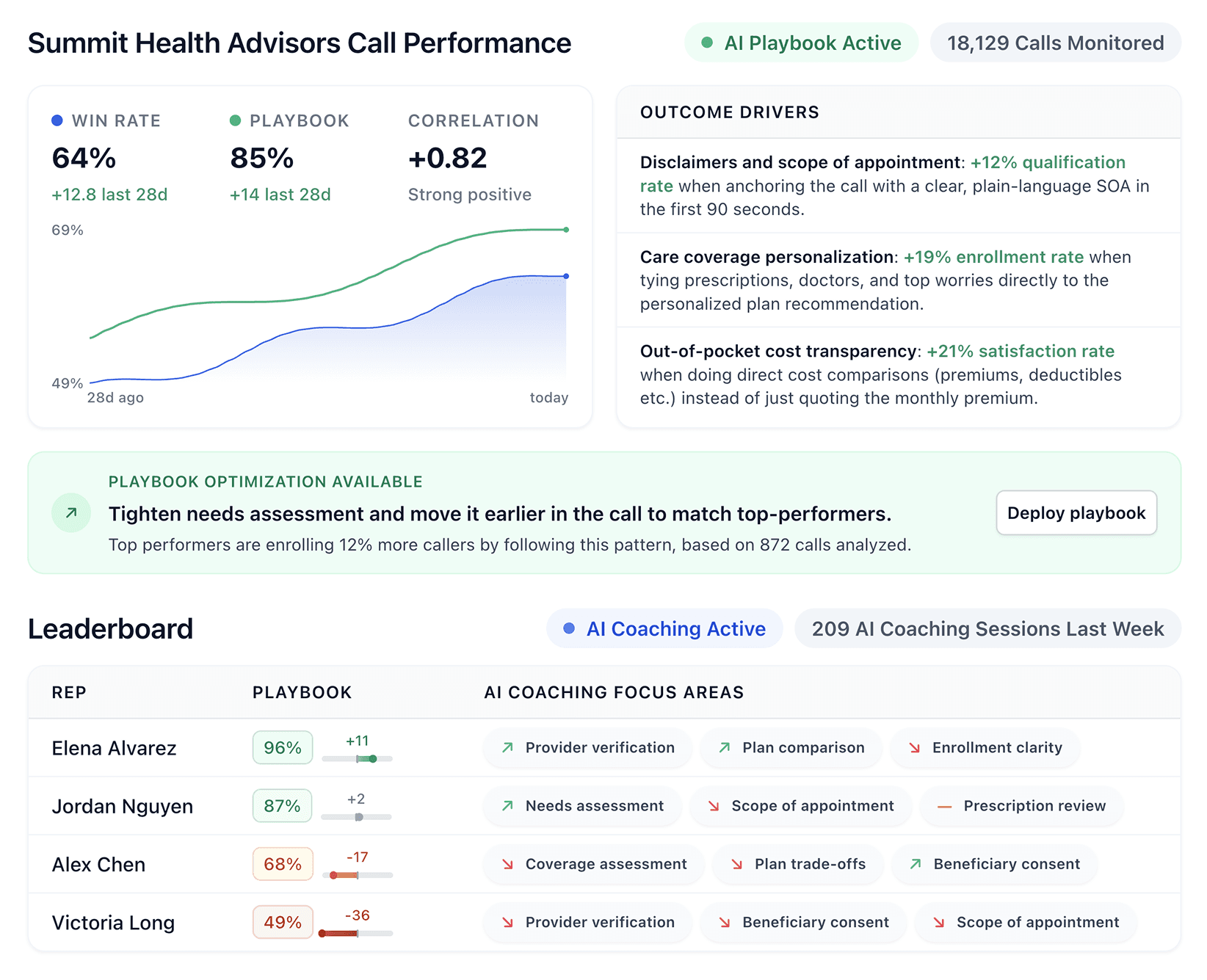

From static templates to intelligent quality monitoring

Teams still follow the same quality guidelines, only now the system scores each call for them. Your template works in the background on all calls, so spot checks become unnecessary.

Automated scoring across all calls

Quality-monitoring tools score each call against your exact criteria. This helps you spot patterns that manual reviews miss, like skipped steps, repeated issues, or early warning signs. Managers get a clear view of performance without spending hours listening to calls.

Custom scoring built from your winners

These tools review your top reps' calls and find the behaviors that move interactions forward. Your scoring criteria reflect those proven tactics rather than generic checklists. Evaluations stay uniform and fit your specific operation.

Sentence-level coaching delivery

The same analysis flags the exact moments where performance slipped. Coaching becomes specific, tied to real timestamps and phrasing. Reps receive short, clear coaching notes that cut down manager follow-up work and accelerate improvement.

Continuous improvement from real results

Things change quickly in your market, and great reps keep pace. The system detects these shifts and updates your scoring to match current success patterns, keeping your team aligned with the behaviors that win right now.

Tools like Alpharun apply this logic at scale, turning your quality form into a system that automatically scores every call.

Bring your form to life on every call

Your quality form shows what a great call should look like, but consistent execution requires more than spot checks. High-volume call center teams need every call to be scored the same way, and that's impossible to do manually at scale.

Alpharun turns your call center quality monitoring form into an automated scoring system that runs on every call. Here's what that looks like in practice:

Scores 100% of calls against your exact criteria, not a random sample

Flags coaching moments at the sentence level, so feedback is specific and immediate

Sends agents short coaching notes directly after calls to take work off the manager's plate

Tracks performance trends across your entire team, not just the calls managers happen to review

Stays compliant with SOC 2 Type 2 and HIPAA requirements for regulated industries

Your standards guide the system, and every agent gets evaluated the same way. Book a demo to see your form applied across your entire call volume.

Frequently asked questions

Who should fill out a call center quality monitoring form?

Quality monitoring forms are typically completed by QA analysts, team leads, or managers. In smaller operations, supervisors handle scoring directly. Whoever fills it out must apply the criteria the same way every time.

What's a good IQS score for a call center?

Most call centers set a minimum acceptable IQS of approximately 80% and a top performer target of 90-95%. Regulated industries like Medicare and insurance typically set higher minimums because compliance errors carry greater risk.

How often should a quality monitoring form be updated?

Update your form at least once per quarter. Markets shift, products change, and a form that never gets updated stops reflecting what actually drives results.

Should agents see their quality monitoring scores?

Yes, agents should see their scores. Sharing results builds accountability, but pair every score with specific coaching notes tied to real call moments. Scores without context don't change behavior.

What is the difference between quality monitoring and quality assurance in a call center?

The main difference between quality monitoring and quality assurance is scope. Quality monitoring evaluates individual calls. Quality assurance is the broader program that includes calibration, coaching, trend analysis, and process improvement across the entire team.