Managers struggle when agent performance feels subjective. This guide breaks down how to evaluate call center agent performance with data you can trust and act on.

How to evaluate call center agent performance

Evaluating call center agent performance means using a consistent process that combines call reviews, QA scoring, and performance metrics. Without this, evaluations become inconsistent, feedback gets delayed, and decisions rely on guesswork.

The 6 steps below build a structured approach your whole team can follow:

1. Set clear performance benchmarks

Define what good looks like before you evaluate anyone. Without a benchmark, scores are meaningless.

Your benchmarks should reflect your specific call type, product, and caller profile. A Medicare sales team has different performance standards than a home services team.

Start by pulling data from your top performers. What does their average handle time look like? How often do they convert? How do they score on QA? Those numbers become your baseline.

Once you have a baseline, set expectations at three levels:

Target: Where the average rep should aim

Strong: What a high performer consistently hits

Minimum: The floor below which a rep needs a coaching plan

This gives managers a shared language and gives reps a clear picture of where they stand.
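As a rough illustration of how the three levels could be derived from top-performer data, here is a minimal Python sketch. The rep names, conversion rates, and the 75% and 50% ratios are hypothetical assumptions chosen for the example, not industry standards.

```python
from statistics import mean

# Hypothetical conversion rates per rep for the last quarter.
conversion_rates = {
    "rep_a": 0.22, "rep_b": 0.18, "rep_c": 0.15,
    "rep_d": 0.12, "rep_e": 0.09, "rep_f": 0.06,
}

def build_benchmarks(rates, top_n=2, target_ratio=0.75, minimum_ratio=0.5):
    """Derive Strong / Target / Minimum levels from the top performers.

    The ratios are illustrative assumptions; tune them to your own team.
    """
    top = sorted(rates.values(), reverse=True)[:top_n]
    strong = mean(top)
    return {
        "strong": round(strong, 3),
        "target": round(strong * target_ratio, 3),
        "minimum": round(strong * minimum_ratio, 3),
    }

def classify(rate, benchmarks):
    """Place one rep's rate against the three levels."""
    if rate >= benchmarks["strong"]:
        return "strong"
    if rate >= benchmarks["target"]:
        return "on target"
    if rate >= benchmarks["minimum"]:
        return "below target"
    return "needs coaching plan"

benchmarks = build_benchmarks(conversion_rates)
for rep, rate in conversion_rates.items():
    print(rep, rate, classify(rate, benchmarks))
```

The same approach works for AHT or QA scores, as long as each metric gets its own baseline.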

2. Review real call recordings and transcripts

Research from Avoma shows that 75% of sales leaders don’t listen to sales calls, even when they have the tools, which creates a major gap in how performance is evaluated.

Call recordings show how reps actually handle conversations, while metrics only show the outcome.

When reviewing calls, focus on how each part of the interaction is handled:

Opening: Did the rep build rapport quickly?

Discovery: Did the rep ask questions before pitching?

Explanation: Was the product explained clearly and accurately?

Objection handling: Did the rep stay composed and redirect?

Close: Did the rep ask for the decision?

Transcripts help you move faster when you're reviewing at scale. You can scan for specific phrases, flag risky language, and identify patterns across dozens of calls without listening to every second.

The combination of recordings and transcripts gives you qualitative evidence to pair with your performance data.
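Scanning transcripts for specific phrases can be sketched in a few lines of Python. The phrase lists and sample transcripts below are hypothetical; a real list would come from your own compliance rules and playbook.

```python
import re

# Hypothetical phrase lists; replace with your own compliance and playbook terms.
RISKY_PHRASES = ["guaranteed", "no risk", "best plan for everyone"]
REQUIRED_PHRASES = ["this call may be recorded"]

def scan_transcript(text):
    """Return risky phrases that appear and required phrases that are missing."""
    lowered = text.lower()
    found = [
        phrase for phrase in RISKY_PHRASES
        if re.search(r"\b" + re.escape(phrase) + r"\b", lowered)
    ]
    missing = [phrase for phrase in REQUIRED_PHRASES if phrase not in lowered]
    return {"risky": found, "missing": missing}

# Hypothetical transcripts keyed by call ID.
transcripts = {
    "call_001": "Hi, this call may be recorded. Tell me about your current coverage.",
    "call_002": "This plan is guaranteed to save you money, there is no risk at all.",
}

results = {call_id: scan_transcript(text) for call_id, text in transcripts.items()}
for call_id, result in results.items():
    print(call_id, result)
```

Simple phrase matching like this only flags calls for a closer look. It does not replace listening to how the rep handled the moment.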

3. Score calls with a consistent QA framework

A QA framework turns subjective call reviews into objective, comparable scores. Without it, two managers reviewing the same call will give wildly different feedback.

A strong QA rubric covers each phase of the call with defined criteria. Each item gets scored on a consistent scale, and the total reflects overall call quality.

Here's what a basic rubric might include:

| 📊 Category | 🎯 Example criteria |
| --- | --- |
| Opening | Required disclosure read, greeting was professional |
| Discovery | At least two qualifying questions were asked |
| Explanation | Benefits explained without unapproved claims |
| Objections | The rep addressed concerns before re-pitching |
| Close | Rep asked for enrollment or the next step |
| Compliance | No prohibited phrases, Scope of Appointment followed |

Calibration is critical. Run calibration sessions where managers independently score the same call, then compare. If scores diverge, refine the criteria until your team is aligned.
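To make the scoring and calibration steps concrete, here is a small Python sketch. The category weights, the 1 to 5 scale, and the one-point spread threshold are all assumptions for illustration; your rubric may weight categories differently.

```python
# Hypothetical weights per rubric category; they sum to 1.0.
WEIGHTS = {
    "opening": 0.10, "discovery": 0.20, "explanation": 0.20,
    "objections": 0.15, "close": 0.15, "compliance": 0.20,
}

def qa_score(item_scores, scale_max=5):
    """Weighted QA score as a percentage of the maximum possible score."""
    total = sum(WEIGHTS[category] * item_scores[category] for category in WEIGHTS)
    return round(100 * total / scale_max, 1)

def calibration_gaps(scores_by_manager, max_spread=1):
    """Categories where managers scoring the same call differ by more than max_spread."""
    gaps = {}
    for category in WEIGHTS:
        values = [scores[category] for scores in scores_by_manager.values()]
        spread = max(values) - min(values)
        if spread > max_spread:
            gaps[category] = spread
    return gaps

# Three managers independently score the same call on a 1 to 5 scale (made-up data).
session = {
    "manager_a": {"opening": 5, "discovery": 4, "explanation": 4,
                  "objections": 3, "close": 4, "compliance": 5},
    "manager_b": {"opening": 5, "discovery": 2, "explanation": 4,
                  "objections": 3, "close": 3, "compliance": 5},
    "manager_c": {"opening": 4, "discovery": 4, "explanation": 3,
                  "objections": 2, "close": 4, "compliance": 5},
}

for manager, scores in session.items():
    print(manager, qa_score(scores))
print("Criteria to refine:", calibration_gaps(session))
```

In this made-up session, only the discovery category exceeds the allowed spread, so that is the criterion the team would rewrite first.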

4. Track key metrics alongside call reviews

Managers often notice a drop in performance but struggle to explain what caused it, since metrics alone lack context and call reviews without data miss the bigger picture.

For example, first call resolution (FCR) rates average just under 70% across the industry, which means a large share of calls still require follow-up. Understanding what happens during those interactions is what turns the number into something you can coach on.

Reviewing both together links metrics to what actually happens in calls, giving managers clear direction on what to improve.

5. Compare agents and identify patterns

Once you have consistent scores and metrics, comparison becomes straightforward. You can see which reps are outperforming, which are struggling, and where the team as a whole has gaps.

An Ebsta analysis shows that around 17% of reps generate 81% of revenue, which highlights how much performance can vary across a team and why it’s important to understand what top performers are doing differently.

Focus on patterns across calls instead of isolated outliers:

Are certain reps strong on compliance but weak on close?

Are new hires struggling with a specific part of the call?

Are high performers doing something on discovery calls that others aren't?

These patterns tell you where to focus coaching and what to build into your playbook.

A note on fairness: Compare reps on the same lead source, shift, and call type where possible. Performance gaps look very different once you control for those variables.
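The effect of controlling for lead source can be shown with a short Python sketch. The reps and numbers below are invented to illustrate the point: one rep looks stronger overall only because of the mix of leads they receive.

```python
from collections import defaultdict

# Hypothetical aggregated records: (rep, lead_source, calls, conversions).
records = [
    ("ana", "inbound", 80, 24),
    ("ana", "outbound", 20, 2),
    ("ben", "inbound", 20, 7),
    ("ben", "outbound", 80, 10),
]

def overall_rates(rows):
    """Conversion rate per rep, ignoring lead source."""
    totals = defaultdict(lambda: [0, 0])
    for rep, _source, calls, conversions in rows:
        totals[rep][0] += calls
        totals[rep][1] += conversions
    return {rep: conv / calls for rep, (calls, conv) in totals.items()}

def segment_rates(rows):
    """Conversion rate per rep within each lead source."""
    return {(rep, source): conversions / calls
            for rep, source, calls, conversions in rows}

overall = overall_rates(records)
by_segment = segment_rates(records)

print("Overall:", overall)
for key, rate in sorted(by_segment.items(), key=lambda item: item[0][1]):
    print(key, round(rate, 3))
```

Here Ana converts 26% overall against Ben's 17%, yet Ben converts at a higher rate on both inbound and outbound leads. Comparing within segments avoids rewarding a rep for an easier lead mix.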

6. Monitor trends over time

A single evaluation only shows a moment in time. Trends reveal whether a rep is improving, staying flat, or slipping.

ICF research shows that 70% of individuals report improved work performance after coaching, which highlights why consistent evaluation and follow-through matter.

Set a clear cadence, so reviews stay consistent and actionable:

Weekly: Review individual metrics for each rep to catch early changes in performance and flag issues before they grow

Monthly: Look at QA score trends and coaching progress to see if feedback is translating into better call execution

Quarterly: Revisit team benchmarks and reset goals based on overall performance and shifting targets

Upward trends after coaching show that your process is working and that reps are applying feedback in real calls. When performance stays flat or declines, even after coaching, it usually points to a deeper gap in skills, training, or call structure that needs closer attention.
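One simple way to label a trend is to fit a straight line through a rep's weekly scores and look at the slope. The Python sketch below does this with a plain least-squares calculation. The weekly scores and the 0.5 points-per-week threshold are assumptions for illustration.

```python
def trend_slope(values):
    """Least-squares slope of values against their position (points per period)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two data points")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    numerator = sum((i - x_mean) * (v - y_mean) for i, v in enumerate(values))
    denominator = sum((i - x_mean) ** 2 for i in range(n))
    return numerator / denominator

def label_trend(values, threshold=0.5):
    """Classify a score series; the threshold is an illustrative assumption."""
    slope = trend_slope(values)
    if slope > threshold:
        return "improving"
    if slope < -threshold:
        return "slipping"
    return "flat"

# Hypothetical weekly QA scores for three reps over six weeks.
weekly_qa = {
    "rep_a": [72, 74, 77, 79, 83, 85],
    "rep_b": [80, 81, 80, 79, 80, 81],
    "rep_c": [84, 82, 81, 78, 76, 75],
}

for rep, scores in weekly_qa.items():
    print(rep, round(trend_slope(scores), 2), label_trend(scores))
```

A slope over a handful of weeks is noisy, so treat the label as a prompt to review calls rather than a verdict.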

4 types of metrics used to evaluate call center agent performance

Metrics fall into four categories, and each one captures a different part of how an agent performs on calls.

Looking at them together gives a more complete picture than relying on any single number.

| 📊 Category | 🔍 What it shows | 📊 Key metrics |
| --- | --- | --- |
| Call handling | How efficiently calls are managed | AHT, transfer rate, resolution time |
| Customer experience | How well reps handle conversations and build trust | FCR, customer feedback, conversion quality |
| Productivity | How reps use their time | Calls handled, occupancy, adherence |
| Quality & compliance | How well reps follow standards | QA scores, script adherence, policy adherence |

1. Call handling metrics

Call handling metrics focus on how efficiently reps move through conversations without losing clarity or control of the call. Efficiency matters, but only when the caller leaves with a clear answer or next step.

The core metrics here include:

Average handle time (AHT): Measures total time per call, including hold and after-call work. The benchmark is around 697 seconds (roughly 11.6 minutes), but a low number paired with low conversion often signals rushed conversations rather than strong performance.

Transfer rate: Tracks how often calls are handed off. In sales environments, each transfer adds friction and increases drop-off risk, so higher rates often point to gaps in knowledge or ownership.

Resolution time: Measures how long it takes to fully address the caller’s issue. Longer times usually reflect unclear call structure or hesitation in key moments.

Taken together, these metrics show whether reps are in control of the call or reacting to it. When AHT is low but transfers are high or resolution time is dragging, the issue is rarely speed. It’s usually structure, confidence, or product clarity.
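For reference, AHT and transfer rate can be computed from per-call timings as in the Python sketch below. The `Call` fields and the sample values are hypothetical; map them to whatever your phone system exports.

```python
from dataclasses import dataclass

@dataclass
class Call:
    talk_s: int        # seconds spent talking with the caller
    hold_s: int        # seconds the caller spent on hold
    wrap_s: int        # seconds of after-call work
    transferred: bool  # whether the call was handed off

def average_handle_time(calls):
    """AHT in seconds: talk + hold + after-call work, averaged per call."""
    return sum(c.talk_s + c.hold_s + c.wrap_s for c in calls) / len(calls)

def transfer_rate(calls):
    """Share of calls that were transferred."""
    return sum(c.transferred for c in calls) / len(calls)

# Made-up sample of four calls for one rep.
sample_calls = [
    Call(420, 30, 90, False),
    Call(600, 60, 120, True),
    Call(300, 0, 60, False),
    Call(480, 45, 75, False),
]

aht = average_handle_time(sample_calls)
rate = transfer_rate(sample_calls)
print(f"AHT: {aht:.0f} s, transfer rate: {rate:.0%}")
```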

2. Customer experience metrics

Customer experience metrics shift the focus from efficiency to how the interaction feels for the caller and whether it leads to a confident decision.

Key metrics include:

First call resolution (FCR): Measures how often an issue is handled in one interaction, with benchmarks between 70 and 79%. Lower rates often point to gaps in clarity or confidence during the call.

Customer feedback: Captures the caller’s perspective, often through post-call surveys focused on clarity and helpfulness rather than general satisfaction.

Conversion quality: Connects experience to outcomes. Strong conversions tend to align with high FCR, low transfer rates, and consistent QA scores, while poor-quality conversions often lead to churn.

If callers need to follow up or leave unsure, something in the conversation is breaking down. FCR and feedback make that visible, often pointing to missed explanations, unclear next steps, or weak guidance rather than a lack of effort from the rep.
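If your system does not record resolution directly, one common proxy for FCR is to check whether the same caller contacts you again within a set window. The Python sketch below takes that approach. The seven-day window, the caller IDs, and the dates are assumptions, and a repeat call is not always about the same issue.

```python
from collections import defaultdict
from datetime import date, timedelta

def fcr_rate(contacts, window_days=7):
    """Share of first contacts with no repeat contact inside the window.

    contacts: iterable of (caller_id, contact_date) pairs.
    """
    by_caller = defaultdict(list)
    for caller_id, day in contacts:
        by_caller[caller_id].append(day)

    window = timedelta(days=window_days)
    first_contacts = 0
    resolved = 0
    for days in by_caller.values():
        days.sort()
        previous = None
        for i, day in enumerate(days):
            # A contact opens a new issue if no earlier contact fell inside the window.
            if previous is None or day - previous > window:
                first_contacts += 1
                next_day = days[i + 1] if i + 1 < len(days) else None
                if next_day is None or next_day - day > window:
                    resolved += 1
            previous = day
    return resolved / first_contacts

# Hypothetical contact log.
contact_log = [
    ("caller_a", date(2024, 3, 3)),
    ("caller_b", date(2024, 3, 3)),
    ("caller_b", date(2024, 3, 5)),   # follow-up within the window
    ("caller_c", date(2024, 3, 4)),
    ("caller_c", date(2024, 3, 20)),  # treated as a new issue
    ("caller_d", date(2024, 3, 6)),
]

fcr = fcr_rate(contact_log)
print(f"FCR (proxy): {fcr:.0%}")
```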

3. Productivity metrics

Productivity metrics look at how reps use their time across a full shift and whether that time translates into results.

These typically include:

Calls handled: Shows activity volume, but only becomes meaningful when paired with conversion rate and QA scores.

Occupancy rate: Measures how much time reps spend actively handling calls, with a healthy range between 75% and 85%. A lower rate suggests overstaffing, while a higher rate often leads to fatigue.

Schedule adherence: Tracks how closely reps follow their assigned shifts. Staying above 80% helps maintain coverage during peak hours.

On their own, these numbers can push teams toward volume over quality. The real value comes from connecting activity to outcomes. High call volume with weak conversion or QA scores often signals wasted effort rather than strong performance.
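Occupancy and schedule adherence are both simple ratios. The Python sketch below uses common definitions (handle time over handle plus idle time, and in-adherence minutes over scheduled minutes) together with the ranges mentioned above. The sample minutes are invented, and your workforce management tool may define these terms slightly differently.

```python
def occupancy(handle_minutes, idle_minutes):
    """Share of logged-in time spent handling calls (assumed definition)."""
    return handle_minutes / (handle_minutes + idle_minutes)

def schedule_adherence(in_adherence_minutes, scheduled_minutes):
    """Share of scheduled time the rep was in the scheduled activity."""
    return in_adherence_minutes / scheduled_minutes

def occupancy_flag(rate, low=0.75, high=0.85):
    """Label an occupancy rate against the 75% to 85% range."""
    if rate < low:
        return "possible overstaffing"
    if rate > high:
        return "fatigue risk"
    return "healthy"

# Hypothetical shift: 330 minutes on calls, 90 minutes waiting for calls.
shift_occupancy = occupancy(330, 90)
shift_adherence = schedule_adherence(410, 450)

print(f"Occupancy: {shift_occupancy:.1%} ({occupancy_flag(shift_occupancy)})")
print(f"Adherence: {shift_adherence:.1%}")
```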

4. Quality and compliance metrics

Quality and compliance metrics focus on whether reps follow defined standards across every call, including those that aren’t manually reviewed.

These areas are worth paying attention to:

Call quality scores: Based on your QA framework, covering the full call from opening through close and compliance checks. Consistency in scoring is what makes this reliable.

Script and playbook adherence: Measures whether reps follow the expected structure. In regulated industries, this includes required disclosures and approved language.

Policy adherence: Covers detailed requirements like disclosures, restricted phrases, and eligibility checks. At scale, this often requires structured QA processes or systems that can evaluate every call.

These metrics protect performance and reduce risk. When adherence drops, the problem is rarely isolated to compliance. It often shows up in lower conversion quality and inconsistent call outcomes, since reps are drifting away from what already works.

Common mistakes in agent evaluation and how to fix them

Even well-intentioned evaluation programs break down when common gaps go unaddressed. Effective evaluation depends on using the right signals and following through consistently.

1. Focusing only on speed

Problem: Teams often lean on AHT and call volume because they are easy to track, but those metrics can be gamed and often reward reps who rush through conversations without actually helping the caller.

How to fix it: Evaluate speed alongside outcomes like conversion and resolution, and use call reviews to understand whether shorter calls reflect clear communication or missed opportunities during key moments.

2. Ignoring call quality

Problem: Metrics capture what happened on the surface, but they leave out how the conversation unfolded, which is where most performance gaps actually show up.

How to fix it: Build coaching around real call examples by highlighting specific moments where the rep lost control, missed a question, or failed to guide the caller, then walk through what a stronger response would have looked like.

3. Inconsistent scoring

Problem: When managers apply different standards, QA scores become unreliable, making it difficult to compare reps or trust the evaluation process.

How to fix it: Create a shared scoring framework with clear criteria for each part of the call, then run regular calibration sessions where managers review the same calls and align on how those calls should be scored.

4. Reviewing too few calls

Problem: Looking at only a small number of calls creates a narrow view of performance, where patterns are easy to miss and both risks and strengths remain hidden.

How to fix it: Increase the number of calls reviewed by using transcripts or automated tools to scan interactions at scale, so recurring issues and strong behaviors become visible earlier.

5. Delayed feedback

Problem: Feedback that comes days or weeks later loses its impact because reps can’t connect it to a specific moment, and by then the same behavior has likely repeated many times.

How to fix it: Shorten the feedback loop by tying coaching to recent calls, keeping it specific to what happened, and tracking whether the same behavior improves in the following reviews.

What strong agent performance looks like

Now that you’ve got a clear way to evaluate performance, you’ll start to see patterns in how your best agents operate across calls.

Consistent call quality

High-performing reps deliver the same level of execution on every call. They follow the call structure, complete required disclosures, and handle objections with control and clarity from the first interaction of the day through the last.

Consistency here signals that performance is repeatable, which makes coaching easier and results more predictable.

Balanced speed and accuracy

Top performers manage handle time without sacrificing clarity. Their efficiency comes from product knowledge and familiarity with the call flow, allowing them to guide conversations without hesitation or unnecessary steps.

When speed and accuracy align, calls move forward smoothly and outcomes improve without creating confusion for the caller.

Positive caller feedback

Strong reps leave callers with a clear understanding of what they are signing up for and why it fits their needs. Feedback tends to reflect clarity, confidence, and a sense that the rep listened and responded to the situation.

Clear communication reduces second calls, lowers friction, and supports higher-quality conversions.

Low escalation and transfer rates

High-performing reps take ownership of the call from start to finish. They handle questions directly, manage objections, and keep the conversation moving without relying on handoffs.

Lower transfer and escalation rates often point to strong product knowledge and confidence during key moments in the call.

Upward performance trends

Strong performance improves over time. High-performing reps apply feedback, adjust their approach, and show measurable progress in areas like QA scores, conversion, or objection handling.

Tracking these trends helps distinguish reps who are developing from those who are maintaining the same level without growth.

What ties this together

Each of these signals connects back to the same idea: Strong performance is consistent, measurable, and improving. When these patterns show up together, managers can coach with confidence and scale what works across the team.

Tools that support agent performance evaluation

The tools you use determine how much of your call center you can actually see and how quickly you can act on it. Most teams rely on a mix of recording, QA, and analytics tools to evaluate performance.

At a high level, these tools fall into four categories:

Call recording and transcription: Capture every interaction so managers can review real conversations instead of relying on memory or summaries.

QA and scoring tools: Provide structured rubrics to evaluate calls and track performance over time.

Performance dashboards: Surface trends across reps and teams, making it easier to spot gaps and areas for improvement.

AI-powered coaching platforms: Analyze calls at scale, identify patterns, and automate scoring and feedback.

As call volume grows, most teams move from manual reviews to tools that can evaluate a larger share of interactions and surface patterns faster.

To explore your options: See our breakdown of the top 10 sales coaching software tools, including Gong, Alpharun, and Balto, based on real testing and use cases.

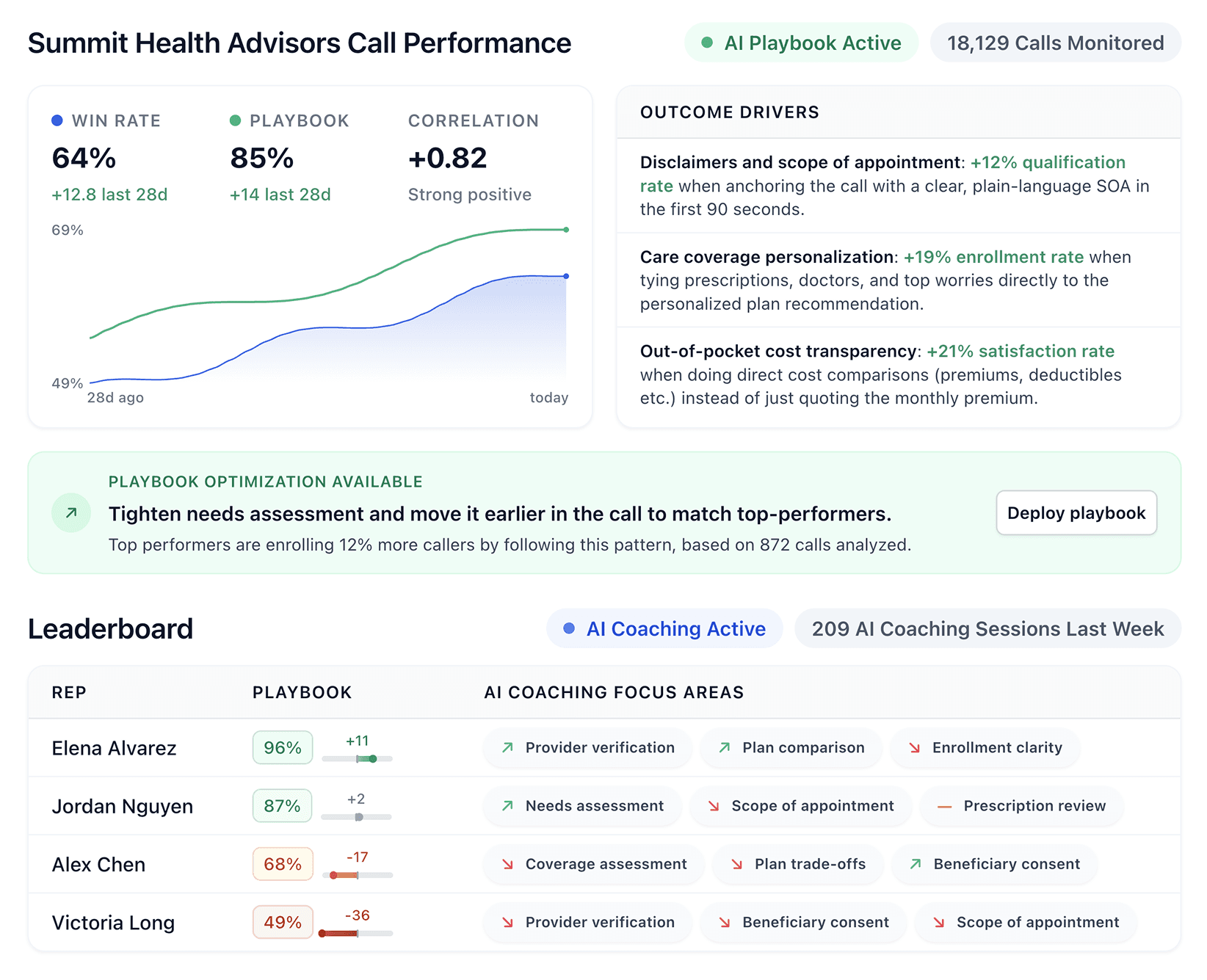

Where tools like Alpharun fit in

You can tell within a few calls that training only goes so far. Once a caller pushes back, the conversation drifts, and the rep loses control.

That’s where teams start to rethink how to evaluate call center agent performance, since manual call reviews only cover a small slice of calls, and feedback often comes too late to change much.

Alpharun looks at every call instead of a sample, so the patterns that would normally take weeks to piece together show up in the first few days, and the behaviors that actually close deals can be built into something the whole team follows.

In practice, it looks like this:

Every call gets scored against your playbook, so evaluation is consistent and not dependent on who reviewed it.

Top-performer behaviors are surfaced across the dataset, making it clear what drives outcomes instead of relying on assumptions.

Those behaviors get turned into a shared playbook, so reps are working against the same standard.

Feedback is tied directly to each rep’s calls, which makes coaching specific and easier to act on.

Managers get a clear view of trends across the team without manually reviewing a large sample of calls.

Whether your team runs on Five9, Genesys, or another major platform, Alpharun integrates directly with what you already have and makes evaluation consistent across every call within a week.

If you want to see how this works on your own calls, you can schedule a demo and walk through how Alpharun surfaces performance patterns across your team.

Frequently asked questions

What is a call center agent performance evaluation?

Call center agent performance evaluation is the process of measuring how well agents handle calls, follow your playbook, and drive outcomes like conversions. It combines performance metrics and QA scoring to give a complete view.

What’s the difference between QA scoring and performance metrics?

The main difference between QA scoring and performance metrics is that metrics measure outcomes, while QA scoring evaluates behavior during the call. You need both to understand what happened and why.

How many calls should you review per agent per week?

Teams should review as many calls as possible, but manual QA limits coverage to a small sample. AI-powered tools can review 100% of calls, making it easier to identify patterns and coach effectively.

Can you evaluate agent performance without call recordings?

No, you can’t fully evaluate agent performance without call recordings. Metrics show results, but recordings reveal what actually happened during the call.

What is the most important metric for call center agent performance?

The most important metric depends on your goal, but conversion rate and call quality score together give the clearest view of performance in sales environments.